Without a clean and crawlable website structure, you’re dead in the water SEO-wise. If you don’t have a solid SEO foundation, you can end up creating serious obstacles for both users and search engines, and that’s never a good idea. Even if you have a clean and crawlable structure, problems with various SEO directives can throw a wrench into the works. Those problems can lie beneath the surface, just waiting to kill your SEO efforts. That’s one of the reasons I’ve always believed that a thorough technical audit is one of the most powerful deliverables in all of SEO.

The Power of Technical SEO Audits: Crawls + Manual Audits = Win

“What lies beneath” can be scary. Really scary… The reality for SEO is that what looks fine on the surface may have serious flaws. And finding those hidden problems and rectifying them as quickly as possible can help turn a site around SEO-wise.

When performing an SEO audit, it’s incredibly important to manually dig through a site to see what’s going on. That’s a given. But it’s also important to crawl the site to pick up potential land mines. In my opinion, the combination of both a manual audit and extensive crawl analysis can help you uncover problems that may be inhibiting the performance of the site SEO-wise. And both might help you surface dangerous optical illusions, which is the core topic of my post today.

Uncovering Optical SEO Illusions

Optical illusions can be fun to check out, but they aren’t so fun when your eyes play tricks on you and your website takes a Google hit as a result.

The word “technical” in technical SEO is important to highlight. If your code is even one character off, it could have a big impact on your site SEO-wise. For example, if you implement the meta robots tag on a site with 500,000 pages, then the wrong directives could wreak havoc on your site. Or maybe you are providing urls in multiple languages using hreflang, and those language versions are adding 30,000 urls to your site. You would definitely want to make sure those hreflang tags are set up correctly.

But what if you thought those directives and tags were set up perfectly when, in fact, they weren’t? They look right at first glance, but there’s something just not right…

That’s the focus of this post today, and it can happen more easily than you think. I’ll walk through several examples of SEO optical illusions, and then explain how to avoid or catch those illusions.

Abracadabra, let’s begin. :)

Three Examples of Technical SEO Optical Illusions

First, take a quick look at this code:

Did you catch the problem? The code uses “alternative” instead of “alternate” in the rel attribute. And that was on a site with 2.3M pages indexed, many of which had hreflang implemented pointing to various language pages.

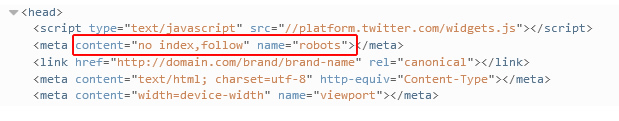

Now take a look at this code:

All looks ok, right? At first glance you might miss it, but the code uses a “content” attribute instead of “href”. If rolled out to a website, it means rel canonical won’t be set up correctly for any pages using the flawed directive. And on sites where rel canonical is extremely important, like sites with urls resolving multiple ways, this can be a huge problem.

Now how about this one?

OK, so you are probably getting better at this already. The correct value should be “noindex” and not “no index”. So if you thought you were keeping those 75,000 pages out of Google’s index, you were wrong. Not a good thing to happen while Pandas and Phantoms roam the web.

I think you get the point.
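
To make the three examples concrete, here is a minimal Python sketch that flags those near-miss directives using only the standard library. The markup in `SAMPLE` is a hypothetical reconstruction of the flaws described above, not the code from the audited sites, and the `DirectiveLinter` class, the `lint` helper, and the list of accepted meta robots values are my own assumptions for illustration.

```python
from html.parser import HTMLParser

# Illustrative subset of meta robots values (an assumption, not a full list).
KNOWN_ROBOTS_VALUES = {
    "all", "index", "noindex", "follow", "nofollow",
    "none", "noarchive", "nosnippet",
}


class DirectiveLinter(HTMLParser):
    """Collects warnings about SEO directives that look right but are not."""

    def __init__(self):
        super().__init__()
        self.warnings = []

    def handle_starttag(self, tag, attrs):
        # Attribute names arrive lowercased; valueless attributes come as None.
        attrs = {name: (value or "") for name, value in attrs}
        if tag == "link":
            rel = attrs.get("rel", "").strip().lower()
            # hreflang annotations must use rel="alternate".
            if "hreflang" in attrs and "alternate" not in rel.split():
                self.warnings.append(
                    f'hreflang link uses rel="{rel}", expected "alternate"'
                )
            # rel canonical needs its target in href, not content.
            if rel == "canonical" and not attrs.get("href"):
                self.warnings.append("rel canonical has no href attribute")
        elif tag == "meta" and attrs.get("name", "").strip().lower() == "robots":
            for value in attrs.get("content", "").split(","):
                value = value.strip().lower()
                if value and value not in KNOWN_ROBOTS_VALUES:
                    self.warnings.append(f'unknown meta robots value "{value}"')


def lint(html):
    """Return a list of warnings for the given HTML string."""
    linter = DirectiveLinter()
    linter.feed(html)
    return linter.warnings


# Hypothetical markup containing the three flaws from the examples above.
SAMPLE = """
<link rel="alternative" hreflang="es" href="https://example.com/es/">
<link rel="canonical" content="https://example.com/page">
<meta name="robots" content="no index, follow">
"""

for warning in lint(SAMPLE):
    print(warning)
```

Running it against `SAMPLE` yields three warnings, one per flawed line. A check like this is no replacement for a full crawl, but it shows how mechanical these mistakes are to detect once you know to look for them.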

How To Avoid Falling Victim To Optical Illusions?

As mentioned earlier, using an approach that leverages manual audits, site-wide crawls, and then surgical crawls (when needed) can help you nip problems in the bud. And leveraging reporting in Google Search Console (formerly Google Webmaster Tools) is obviously a smart way to proceed as well. Below, I’ll cover several things you can do to identify SEO optical illusions while auditing a site.

SEO Plugins

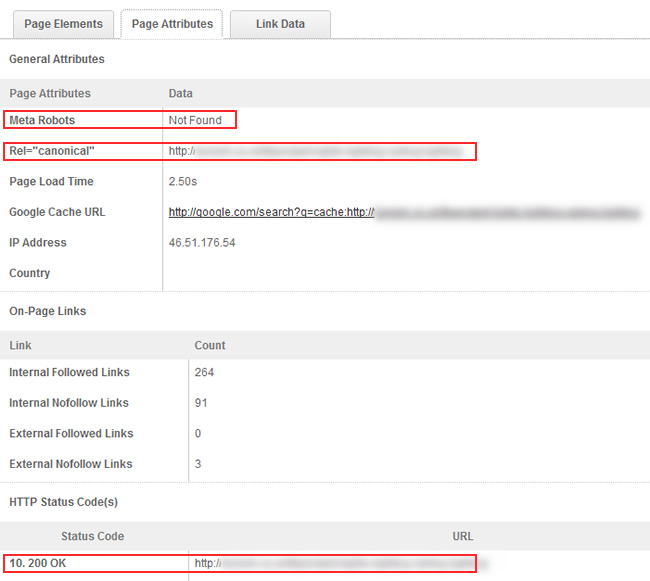

From a manual audit standpoint, plugins like MozBar and SEO Site Tools can help you quickly identify key elements on the page. For example, you can easily check rel canonical and the meta robots tag via both plugins.

Crawlers

From a crawl perspective, you can use DeepCrawl for larger crawls and Screaming Frog for small to medium size crawls. I often use both DeepCrawl and Screaming Frog on the same site (using “The Frog” for surgical crawls once I identify issues through manual audits or the site-wide crawl).

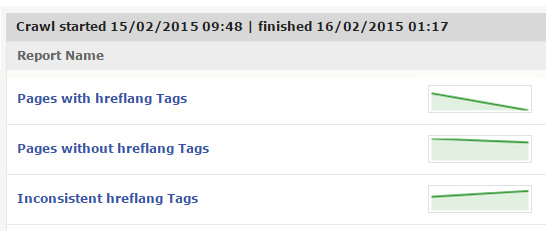

Each tool provides data about key technical SEO components like rel canonical, meta robots, rel next/prev, and hreflang. Note, DeepCrawl has built-in support for checking hreflang, while Screaming Frog requires a custom search.

Once the crawl is completed, you can double-check the technical implementation of each directive by comparing what you are seeing during the manual audit to the crawl data you have collected. It’s a great way to ensure each element is ok and won’t cause serious problems SEO-wise. And that’s especially the case on larger-scale websites that may have thousands, hundreds of thousands, or millions of pages.

Google Search Console Reports

I mentioned earlier that Google Search Console reports can help identify and avoid optical illusions. Below, I’ll touch on several reports that are important from a technical SEO standpoint.

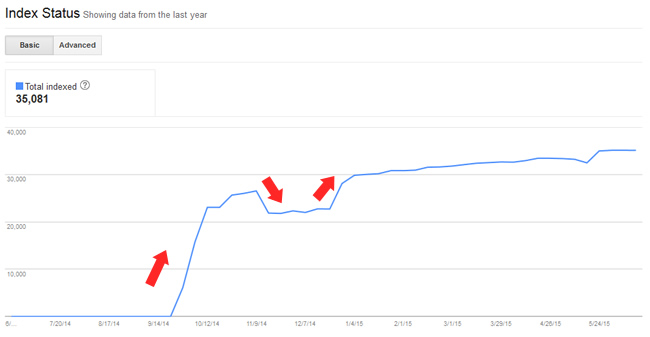

Index Status

Using index status, you can identify how many pages Google has indexed for the site at hand. And by the way, this can be done at the directory level (which is a smart way to go). Index Status reporting will not identify specific directives or technical problems, but can help you understand if Google is over- or under-indexing your site content.

For example, if you have 100,000 pages on your site, but Google has indexed just 35,000, then you probably have an issue…

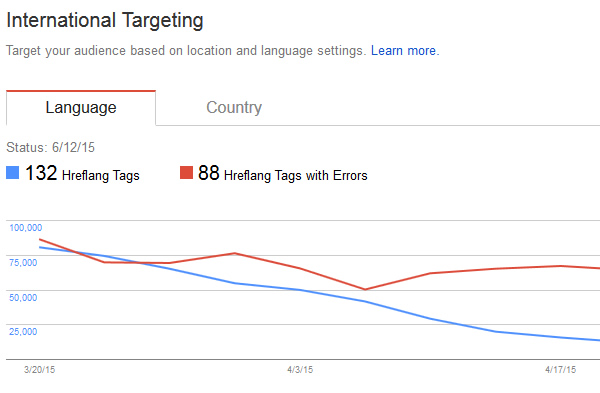

International Targeting

Using the international targeting reporting, you can troubleshoot hreflang implementations. The reporting will identify hreflang errors on specific pages of your site. Hreflang is a confusing topic for many webmasters and the reporting in GSC can get you moving in the right direction troubleshooting-wise.
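
One hreflang mistake that is easy to miss by eye is a missing return link: page A lists page B as an alternate, but page B never points back to A. The sketch below checks for that in Python. It assumes you have already collected each page's hreflang annotations into a dictionary (for example from a crawl export); the `missing_return_links` function and the example urls are hypothetical.

```python
def missing_return_links(hreflang_map):
    """Return (source, target) pairs where target does not link back to source.

    hreflang_map maps a page url to a dict of {language code: alternate url}.
    """
    missing = []
    for source, alternates in hreflang_map.items():
        for target in alternates.values():
            if target == source:
                continue  # a self-reference needs no return link
            back_links = hreflang_map.get(target, {}).values()
            if source not in back_links:
                missing.append((source, target))
    return missing


# Hypothetical crawl data: the Spanish page forgot to reference the English one.
pages = {
    "https://example.com/en/": {
        "en": "https://example.com/en/",
        "es": "https://example.com/es/",
    },
    "https://example.com/es/": {
        "es": "https://example.com/es/",
    },
}

print(missing_return_links(pages))
```

With the data above, the only pair reported is the English page pointing at the Spanish page, since the Spanish page has no annotation pointing back.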

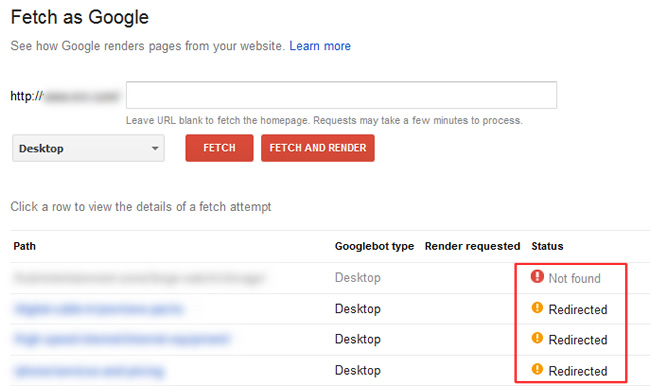

Fetch as Google

Using Fetch as Google, you can see exactly what Googlebot is crawling and the response code it is receiving. This includes viewing the meta robots tag, rel canonical tags, rel next/prev, and hreflang tags. You can also use fetch and render to see how Googlebot is rendering the page (and compare that to what users are seeing).

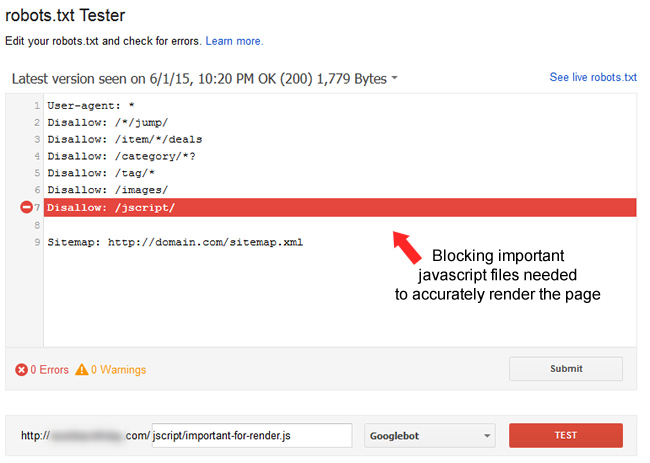

Robots.txt and Blocked Resources

The new robots.txt Tester in Google Search Console enables you to test the current set of robots.txt directives against your actual urls (to see what’s blocked and what’s allowed). You can also use the Tester as a sandbox to change directives and test urls. It’s a great way to identify current problems with your robots.txt file and see if future changes will cause issues.
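
You can run a similar sandbox check locally before touching the live file. Here is a minimal sketch using Python's standard `urllib.robotparser` module. The draft rules, the urls, and the `check_urls` helper are hypothetical, and note that this parser uses simple prefix matching, so its results may not match how Google handles wildcards. Treat it as a quick sanity check, not a replacement for the Tester.

```python
from urllib.robotparser import RobotFileParser


def check_urls(robots_txt, urls, user_agent="Googlebot"):
    """Map each url to True (allowed) or False (blocked) for the user agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {url: parser.can_fetch(user_agent, url) for url in urls}


# Hypothetical draft directives you are considering rolling out.
DRAFT_RULES = """\
User-agent: *
Disallow: /search/
Disallow: /cart
"""

results = check_urls(
    DRAFT_RULES,
    [
        "https://example.com/search/shoes",
        "https://example.com/products/blue-shoes",
    ],
)

for url, allowed in results.items():
    print("allowed" if allowed else "blocked", url)
```

In this draft, the search url is blocked and the product url is allowed, which is a quick way to confirm a new directive is not catching pages you want crawled.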

Summary – Don’t Let Optical Illusions Trick You, and Google…

If there’s one thing you take away from this post, it’s that technical SEO problems can be easy to miss. Your eyes can absolutely play tricks on you when directives are even just a few characters off in your code. And those flawed directives can cause serious problems SEO-wise if not caught and fixed.

The good news is that you can start checking your own site today. Using the techniques and reports I listed above, you can dig through your own site to ensure all is coded properly. So keep your eyes peeled, and catch those illusions before they cause any damage. Good luck.

GG