Google’s new index coverage reporting is killer. For a long time, the SEO community wanted something more powerful than the simple index status report in the old GSC. You know, something we could really sink our SEO teeth into. And we finally received that in the form of the new index coverage reporting in the GSC beta. I’m part of the GSC beta group and I remember how excited I was when I first started testing it out.

In the past with the index status report, you could only see very basic trending for indexation across a property in GSC. That was at least something, since site: queries can be wildly off. But SEOs wanted to dig deeper: understand which specific urls were indexed, view problems with urls submitted in sitemaps, understand which urls Google was excluding from indexing (and why), and more. Well, that’s what we received with the new index coverage reporting. It was a powerful addition to the GSC beta.

Download Limits – The Bane of an SEO’s Existence

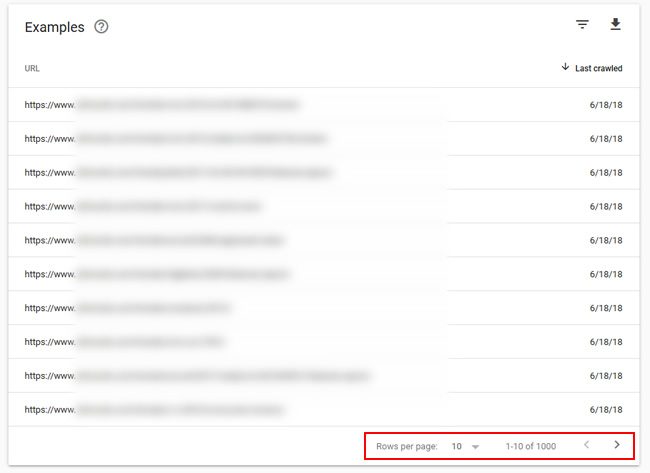

As mentioned earlier, I’m part of the GSC beta where I can help test out new functionality. I was beyond excited when testing the new index coverage report, but there were some things that needed to be improved along the way. One important item from my perspective was expanding the number of urls you can view and download from the index coverage reporting. As of now, you can only download the top one thousand urls from any given report in the index coverage tool.

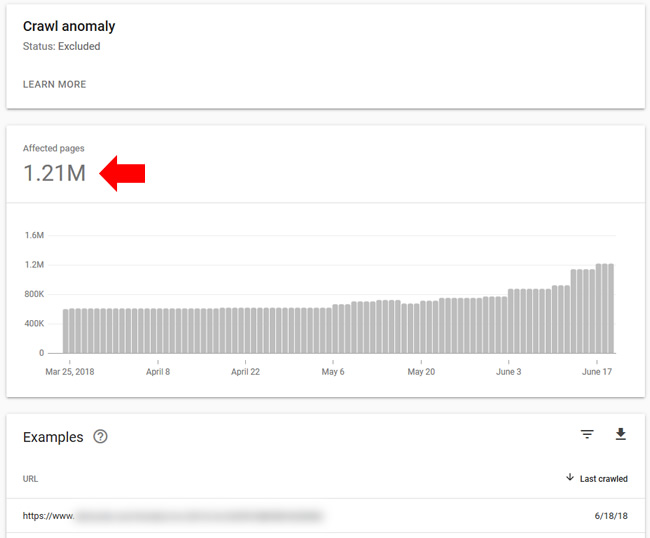

I’m often working on large-scale sites with many urls (hundreds of thousands, millions, or tens of millions of urls). As you can imagine, limiting exports from index coverage to one thousand urls was seriously inadequate. As a quick example, here’s the crawl anomaly report with 1.2M urls. It’s important to dig into that report, but I can only view and download the top one thousand urls. Not good… not good at all.

So how can we get more data from GSC’s index coverage reporting? Is there a way to squeeze more urls out of the tool? Yes, there is, and I’m going to cover that next.

Properties in Google Search Console (GSC)

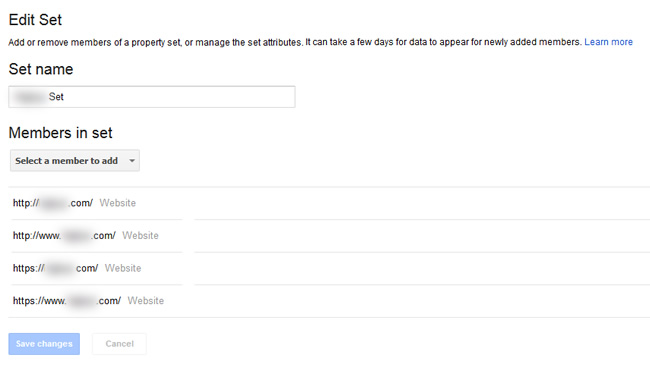

When helping new clients, it’s normal to gain access to their GSC properties as soon as I get started. But all too often, I check GSC and see just one property there. For example, just the https www version of the site (or whichever is the canonical version of the site). The problem is that GSC properties are specific to both subdomain and protocol. So, www is different from non-www, http is different from https, etc.

Therefore, you should have at least four properties set up in GSC for each site (http www, http non-www, https www, and https non-www). Then you can view reporting for each property and make sure you have a well-rounded view of your site from a GSC standpoint.
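If you manage many sites, it can help to list the four variants programmatically before adding them. Here is a minimal Python sketch that only builds the URL strings; `property_urls` is a hypothetical helper, `example.com` is a placeholder, and it assumes you pass the bare domain without `www.`. Adding and verifying each property still happens in GSC itself.

```python
from itertools import product
from typing import List


def property_urls(domain: str) -> List[str]:
    """Build the four protocol/subdomain variants for one site.

    Assumes `domain` is the bare host without "www." (e.g. "example.com").
    Order: http www, http non-www, https www, https non-www.
    """
    urls = []
    for scheme, prefix in product(("http", "https"), ("www.", "")):
        urls.append(f"{scheme}://{prefix}{domain}/")
    return urls


for url in property_urls("example.com"):
    print(url)
```

The output follows the same order as the list above, so you can work through it top to bottom when setting up properties.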

And as Forrest Gump once said, “GSC is like a box of chocolates, you never know what you’re gonna find.” OK, he never said that. But in the world of SEO, he would say that. :) There are times you might find some interesting things in non-canonical properties. Don’t let those surprise you down the line. Set them all up today. Note, you can also add each of those properties to a Property Set and view rollup reporting. I wrote about Property Sets and how to create them a few years ago, and setting one up is a smart move.

Adding Directories As Properties in GSC: Juice Up Your Index Coverage Reporting

Four properties per site in GSC is great, but savvy SEOs have known another trick for a long time. It’s one I wrote about in an SEO galaxy far, far away (in 2014). That’s when Search Console was called Google Webmaster Tools, Matt Cutts was still at Google, medieval Panda roamed the web, and the original Penguin was busy squashing websites.

What’s the trick? Well, you can add any directory on your site as a property in GSC. And when you do, you will see reporting focused on just that directory. So, all of the reporting will only contain information for the directory you added. It’s an awesome way to get more out of GSC, including more data from any report you can export! More about that soon.
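To make the scoping concrete, here is a simplified Python model of which urls fall under a directory property. It assumes the property is defined by a url prefix (protocol, host, and path) and that membership is a plain string-prefix check; `in_property` and the example urls are hypothetical, and this is an approximation for illustration, not Google’s actual implementation.

```python
def in_property(url: str, property_prefix: str) -> bool:
    """Approximate whether `url` belongs to a directory property.

    Simplifying assumption: membership is an exact string-prefix match,
    so protocol, host, and path must all line up with the prefix.
    """
    return url.startswith(property_prefix)


sample_urls = [
    "https://www.example.com/blog/post-1",
    "https://www.example.com/products/widget",
    "http://www.example.com/blog/post-2",
    "https://www.example.com/blog/",
]
blog_property = "https://www.example.com/blog/"

# Only urls under the https www /blog/ directory are kept.
print([u for u in sample_urls if in_property(u, blog_property)])
```

Note that the http url is left out even though its path matches, which mirrors the earlier point that properties are specific to protocol.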

Side note about my tweet from this week:

I picked up some very interesting news this week related to GSC properties from a recent webmaster hangout video. Google’s John Mueller explained that the GSC product team is thinking about letting site owners add a root domain to GSC and then Google would verify all versions of the site in one shot. And that might include subdomains as well! This would be incredible, and the reaction from the SEO community says it all. My tweet is below, which links to the video of John explaining this:

Video from @johnmu: Whoa, Google is thinking about letting site owners add a root domain to GSC, and then Google would add *all versions* automatically (www, non-www, http, https, etc). Maybe even subdomains! That would be awesome. :) https://t.co/qTBJRHpM1m pic.twitter.com/8SmqotqALj

— Glenn Gabe (@glenngabe) June 19, 2018

Index Coverage by Directory

If you run a large-scale site, then you are probably ridiculously eager to add directories to GSC right now. But first, let me show you why doing so is important for working with the new index coverage reporting. I think you’ll love this trick for getting more out of GSC (especially from an export perspective).
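Before adding directories, you may want to see which ones hold the most urls. Here is a rough Python sketch, assuming you already have a list of urls (for example, from an XML sitemap or a crawl); `top_level_directory` and `directory_counts` are hypothetical helpers, and only the first path segment is considered.

```python
from collections import Counter
from typing import Iterable
from urllib.parse import urlsplit


def top_level_directory(url: str) -> str:
    """Return the first path segment as a directory, e.g. "/blog/".

    Pages sitting directly at the root (like "/about") map to "/".
    """
    parts = urlsplit(url).path.split("/")
    # "/blog/post-1" splits into ["", "blog", "post-1"]
    if len(parts) > 2 and parts[1]:
        return f"/{parts[1]}/"
    return "/"


def directory_counts(urls: Iterable[str]) -> Counter:
    """Count urls per top-level directory."""
    return Counter(top_level_directory(u) for u in urls)


sample_urls = [
    "https://www.example.com/blog/post-1",
    "https://www.example.com/blog/post-2",
    "https://www.example.com/blog/",
    "https://www.example.com/products/widget",
    "https://www.example.com/about",
]
for directory, count in directory_counts(sample_urls).most_common():
    print(directory, count)
```

The directories at the top of that list are natural candidates to add as properties first.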

1. Focused reporting – Only urls from the directory you submitted

First, the various sections of the index coverage reporting will only contain urls from the directory you added. So instead of seeing millions of urls overall, you will see counts directly related to the directory you added as a property.

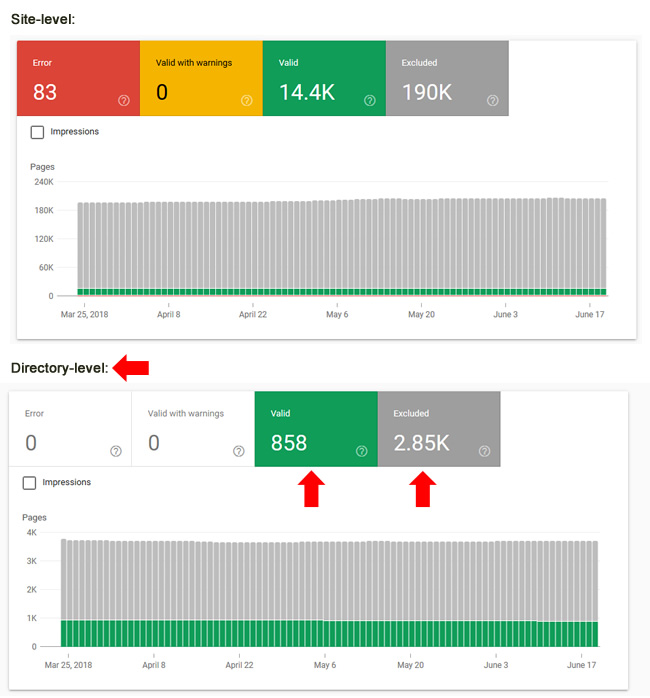

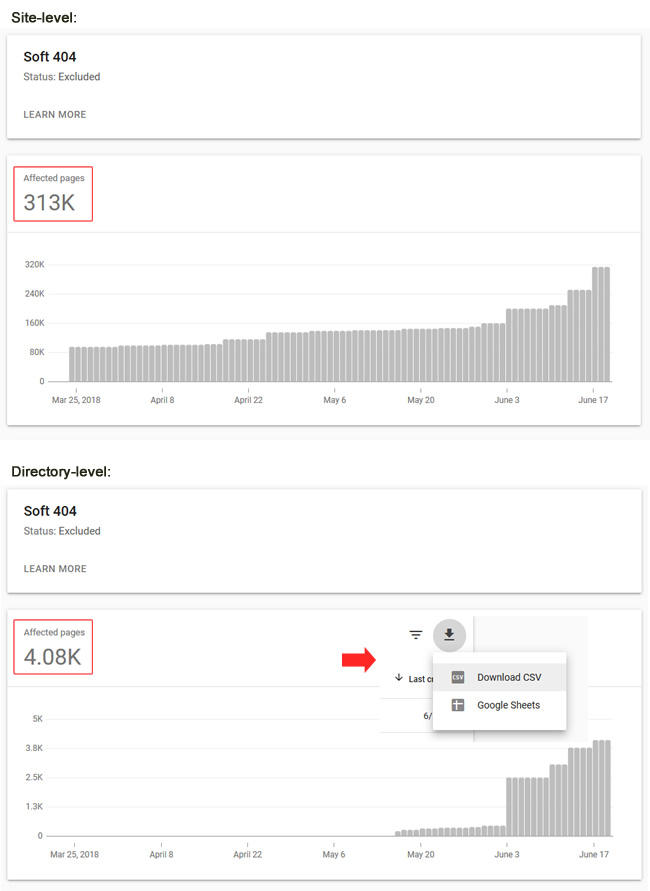

This can help you surface, analyze, and then fix specific problems in any given directory more quickly than by looking at the site overall. For example, errors, warnings, and excluded urls will only be from the directory at hand. Here’s a quick example showing the difference in urls for the site versus a specific directory:

2. Trending by directory (with notifications)

The trending you view for each report will also be focused on the directory versus the entire site. That can provide a great visual for how specific issues are trending over time (but just for the folder you are analyzing). You will see a rolling 90-day graph for each report.

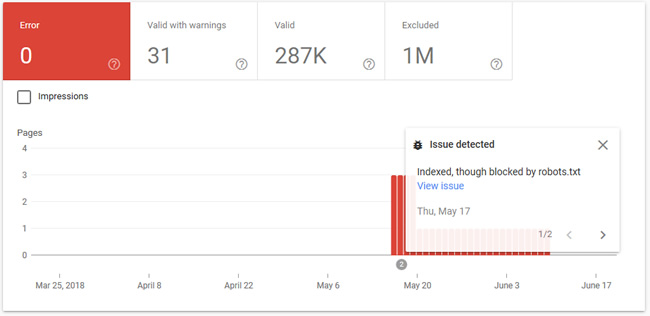

And the index coverage reporting also provides notifications when specific issues show up. The notifications are also focused on the directory at hand. For example, here’s a notification about urls that are indexed, though blocked by robots.txt. The urls affected (and listed in the report) are only located in the directory I am analyzing. Awesome.

3. Indexation by directory

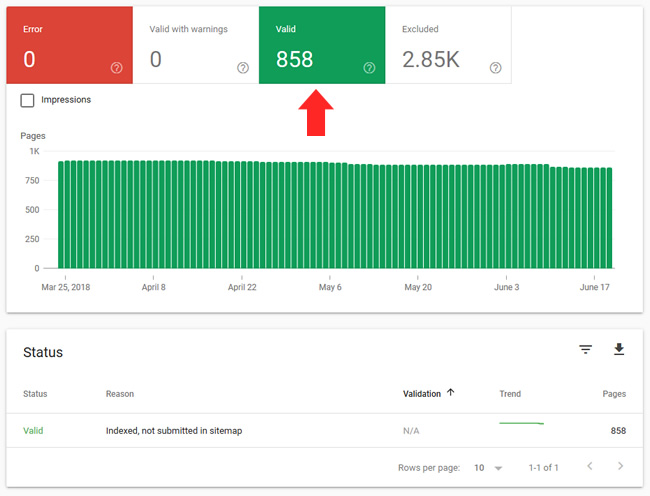

One of the great things about the new index coverage reporting is that you can finally see the urls that Google has indexed (well, at least the top one thousand). That information is contained in the Valid category in your reporting. But now when you add a directory, you can see indexation by directory. And you can export the urls as well to get a closer look at specific pages that are valid and have been indexed. Here is an example of a directory with 858 urls indexed versus many more for the entire site. And this list can be fully downloaded, since it’s under 1K urls.

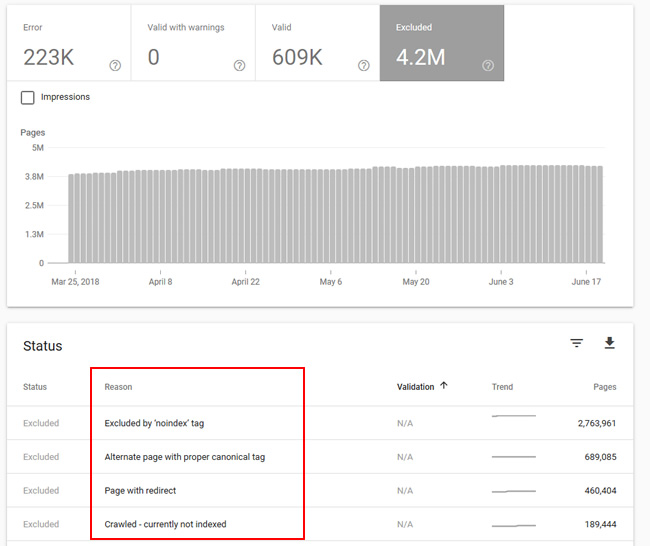

4. Excluded by directory

One of the most important additions with the index coverage report was the Excluded category. This is where Google will provide urls that it’s choosing to exclude from indexing. It’s broken down by category, and you can often find glaring issues by analyzing the various reports in this section.

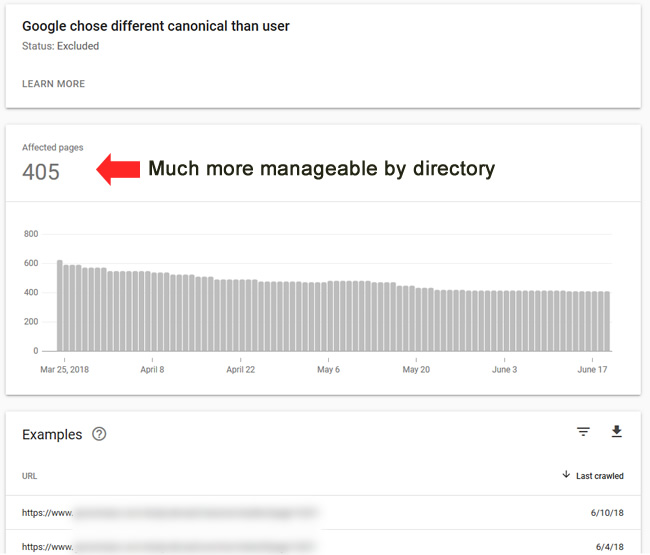

And now when you add directories, you can view excluded urls by directory! Again, it’s a focused set of reports versus lumping together urls from across the entire site. For example, one of my favorite reports is “Google chose a different canonical than user”. That’s where you can view urls that contain a canonical tag, but Google chose to ignore that tag and index a different url. As you can imagine, it’s important to understand what’s going on there. And that’s especially the case if you see thousands of urls in the report (or more). Below, the directory has 405 urls versus many more at the site-level. Again, this helps greatly when analyzing a problematic situation.

5. Downloads by directory

This is one of the most important benefits you receive by adding directories in GSC. The index coverage reporting enables you to download any report, but it’s limited to the top one thousand urls per report. For smaller sites, that number might suffice, but for larger-scale sites, it’s typically very limiting.

But when you add directories, the reporting will not contain urls from across the entire site. It will only contain urls for the directory you are analyzing. Therefore, you can export more urls for the directory at hand. That includes urls that are indexed as well as urls that are being excluded from indexing. It’s a more surgical way to export urls.
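Once you have downloaded reports from several properties, you might want a single list. Here is a minimal Python sketch that merges CSV exports and removes duplicates, which matters if exports overlap (for example, a directory property and the root property). It assumes each file has a header row with a column named `URL`; that column name is an assumption, so check it against your actual export files. `merge_exports` is a hypothetical helper.

```python
import csv
import io
from typing import Iterable, List, TextIO


def merge_exports(files: Iterable[TextIO], url_column: str = "URL") -> List[str]:
    """Merge several CSV exports into one sorted, de-duplicated url list.

    Assumes each file has a header row containing `url_column`
    (the default name "URL" is an assumption; match it to your files).
    Other columns are ignored, and blank or missing values are skipped.
    """
    seen = set()
    for f in files:
        for row in csv.DictReader(f):
            url = (row.get(url_column) or "").strip()
            if url:
                seen.add(url)
    return sorted(seen)


# In-memory stand-ins for two overlapping exports (hypothetical data).
directory_export = io.StringIO(
    "URL\nhttps://www.example.com/blog/a\nhttps://www.example.com/blog/b\n"
)
root_export = io.StringIO(
    "URL\nhttps://www.example.com/shop/c\nhttps://www.example.com/blog/a\n"
)
print(merge_exports([directory_export, root_export]))
```

With real downloads, you would pass file objects opened with `open(path, newline="", encoding="utf-8")` instead of the `io.StringIO` stand-ins.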

For example, when checking the https www property for this site, you can see 312K soft 404s. But when checking just the directory I added, you can see 4K. So, when you export the top one thousand from the directory property, you’ll be getting more data specifically for that folder (1/4 of the total). That’s versus trying to export one thousand out of 312K. And then you can do this for other important directories to see where the core problems are per directory.
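The arithmetic behind that comparison is simple: a capped export covers `min(limit, total) / total` of a report. Here is a small Python sketch using the rounded figures from this post (312K, 4K, and the 858-url Valid example), assuming a cap of one thousand urls per report; `export_coverage` is a hypothetical helper.

```python
EXPORT_LIMIT = 1_000  # per-report download cap in the index coverage reporting


def export_coverage(total_urls: int, limit: int = EXPORT_LIMIT) -> float:
    """Fraction of a report's urls that fit in one capped export.

    An empty report is treated as fully covered (nothing is missed).
    """
    if total_urls <= 0:
        return 1.0
    return min(limit, total_urls) / total_urls


reports = [
    ("site-level soft 404s", 312_000),
    ("directory soft 404s", 4_000),
    ("directory indexed (Valid)", 858),
]
for label, total in reports:
    print(f"{label}: {export_coverage(total):.2%}")
```

That works out to roughly 0.32% of the site-level soft 404s versus 25% of the directory’s soft 404s, and the full list for the 858-url directory.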

Summary – Get all of the data you can by adding directories in GSC

The new index coverage reporting in GSC is powerful, and it was a huge improvement over the index status report in the old GSC. That said, the reporting will only list the top one thousand urls per category, which can be very limiting for larger-scale sites. But if you add directories, you can focus the reporting on just that folder (versus viewing urls from across the entire site). And when you do, your downloads will be more focused as well (which can get you even more data for the problem at hand). So go ahead and add your key directories today. Data awaits. :)

GG