As I’ve been analyzing the impact from the August broad core update (I’ll have more to share on that soon…), I’ve been digging into drops and surges across sites. For larger-scale sites, I often come across pockets of content that I want to take a closer look at from a quality standpoint. And as part of that analysis, I often want to cross-reference GSC data to better understand if Google is surfacing that content in the SERPs, how many clicks that content is receiving, how that looks across mobile and desktop, and more.

For example, maybe there is a large group of articles you want to explore in greater detail, a number of product pages you want to analyze, or a group of reviews from across a site. And maybe there are hundreds, or even thousands, of those urls that you want to pull GSC data for. For situations like that, you don’t want to simply export ALL data for a GSC property and dig through it. You just want GSC data for the group of urls you are analyzing.

I have already written several articles about bulk exporting GSC data using Analytics Edge, including how to automate delta reports, but I never covered how to export data for a specific set of urls. It was just for exporting that data by property. That’s why I came up with a solution for exporting data just for the urls I want to check. And beyond that, the system I created can export data across Google surfaces like images, video, news, Discover, etc. This process can be very helpful after major algorithm updates roll out like broad core updates, helpful content updates, and reviews updates.

What we are going to achieve via the GSC API and Analytics Edge:

First, we’ll create a list of urls that we want to export GSC data for. That list can contain as many urls as you want (dozens, hundreds, or even thousands). Then we’ll use Analytics Edge to bulk export performance data via the GSC API and then use the worksheet of urls as a lookup table. In other words, we’ll match the exported data with the worksheet we create containing urls we want to check, and then export the data that matches those pages.

And later in this post, I’ll quickly explain how you can expand this system to string multiple macros together to check several Google surfaces in one shot (news, Discover, search, images, etc.)

Step by step: How to bulk export GSC data for a specific set of URLs:

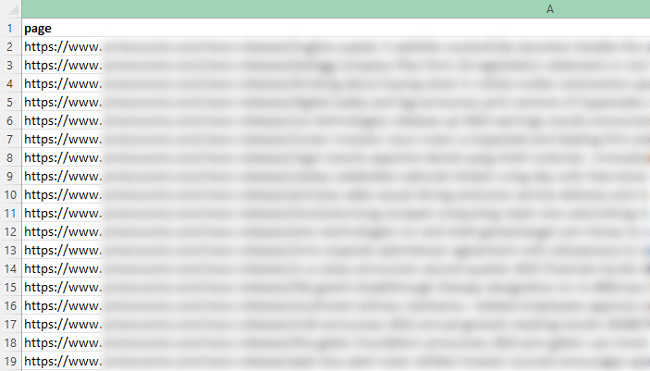

1. Gather your list of urls:

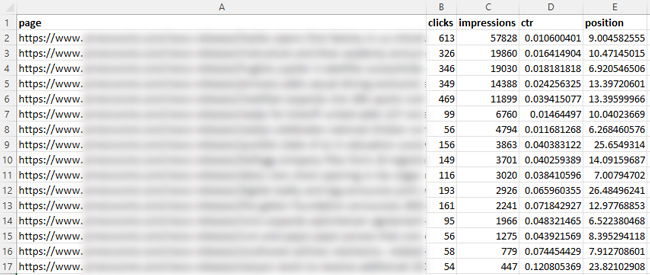

First, create a worksheet of urls you want to export GSC data for. This can be based on an audit you are conducting, a recent crawl you completed, an xml sitemap you have, etc. Basically, you are looking to view clicks, impressions, click through rate, and position for a set of urls. Name the worksheet “Pages” and you can name the column “page”.

2. Export landing pages via the GSC API for a property:

The next step is to export all landing page data for the GSC property you want to analyze. Note, you will not be filtering data at this stage, so just export all of the data via the API. We’ll filter the data in the next step based on the worksheet you created. You can view my other tutorial for exporting landing page data via Analytics Edge or you can view the abbreviated steps below. It’s very easy to do.

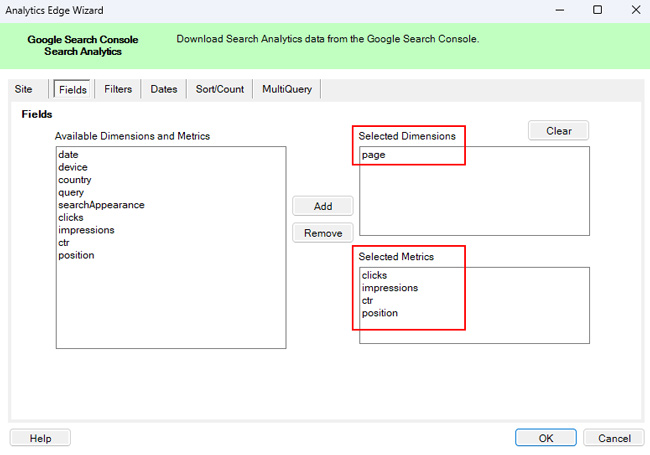

3. Export landing page data via the GSC API and Analytics Edge:

When exporting the data, select “page” as the dimension and make sure the selected metrics include clicks, impressions, ctr, and position.

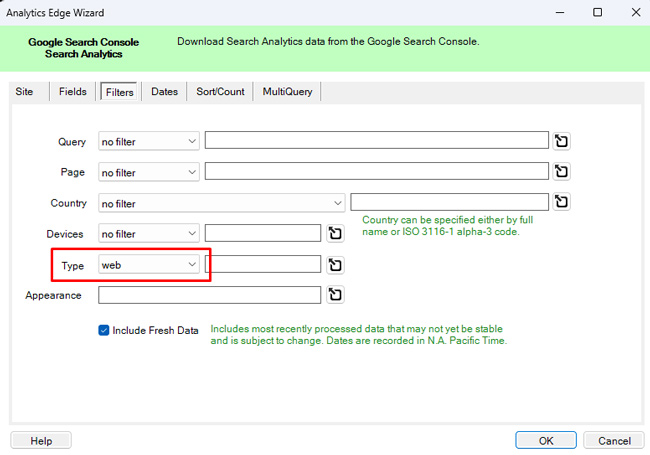

4. Export Web Search data:

In the filters tab, select “web” under Type (for Web Search data). I’ll explain more about exporting other types of data later in the tutorial.

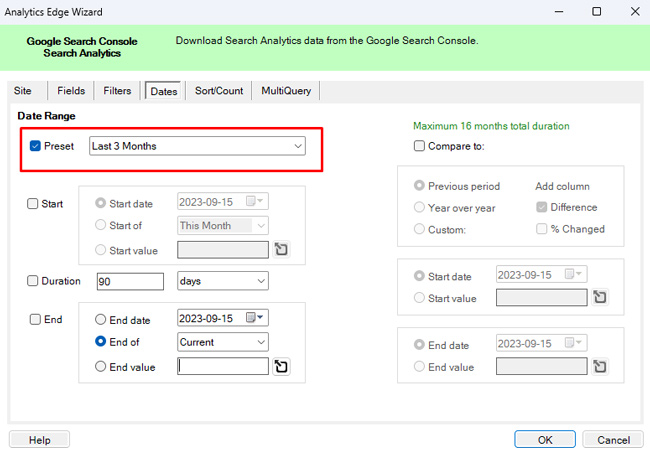

5. Select a timeframe:

For the dates tab, select the timeframe you want to check. You can choose a preselected timeframe or set a custom timeframe. For this tutorial, select “Last 3 Months”.

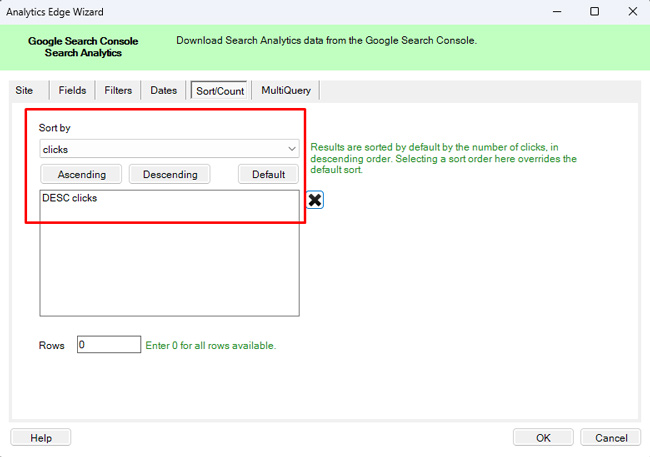

6. Sort by clicks or impressions:

In the Sort/Count tab, use the dropdown to select “Clicks” and the click the button for “Descending”. This will sort the exported data by pages with the most clicks first. You can also sort by impressions if you are just looking to see if the pages ranked in the SERPs. Either way, you can easily sort the export via Analytics Edge.

7. Export the data:

Click “OK” in the bottom of the Analytics Edge modal window to export the data via the GSC API.

8. It’s lookup table time! Filter based on the Pages worksheet:

Before we write this data to a worksheet, we want to filter the export to only provide data for the urls we listed in our Pages worksheet. Remember, we don’t want all the data, we just want to analyze the data for the pages we included in that worksheet.

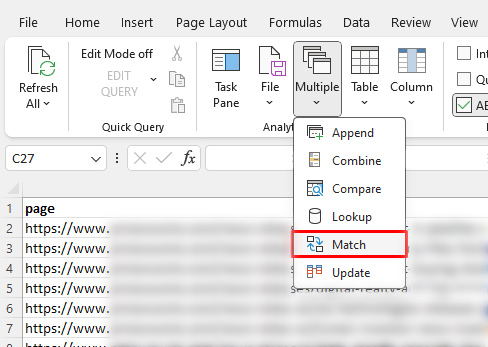

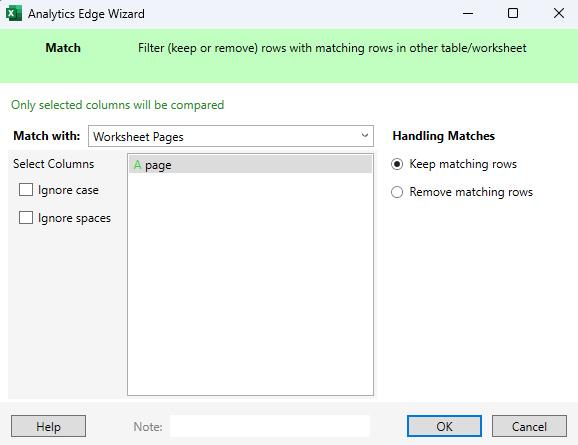

9. Use the Match function to check the Pages worksheet:

By using the Match function, we can use the Pages worksheet as a lookup table and only write the data for those urls to a new worksheet. Click the dropdown in the Analytics Edge menu labeled “Multiple” and click “Match”. Then use the “Match with” dropdown to select “Worksheet Pages” and then keep the selected column as “A page”. And make sure the radio button for “Handling Matches” is set to “Keep matching rows”. Click “OK” to execute the match.

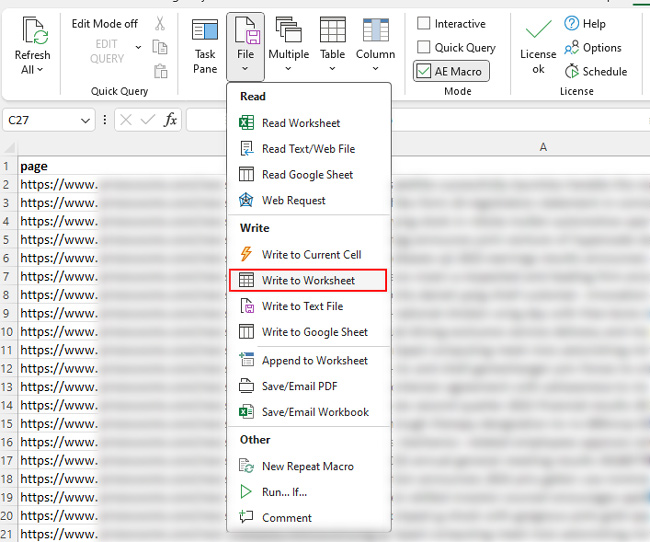

10. Write to worksheet:

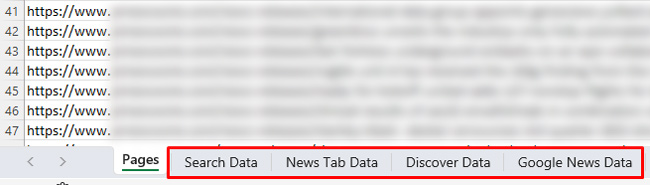

The final step is to write the filtered data to a worksheet. Click the dropdown labeled “File” in the Analytics Edge menu and click “Write to Worksheet”. In the “Worksheet field”, enter a name for the worksheet that will contain the filtered data. You can name it “Search Data” for this tutorial. Then click “OK”.

Congratulations, you have successfully exported GSC data for a specific list of urls! If you are ever looking for a quick way to view GSC data for a set group of urls (no matter the size), you now have a template for accomplishing that task. Again, Analytics Edge is like a Swiss Army Knife for working with APIs.

Bonus: It’s Analytics Edge, Be Creative, Go Nuts:

In previous tutorials, I explained how to string multiple macros together to automate even more actions. Well, for this tutorial you could easily create new macros for exporting more data from across Google surfaces like Discover, images, the news tab in Search, Google News, etc. Once you set up multiple macros, you will have a system ready for exporting data by Google surface for a specific set of urls (and by clicking a single button in Excel).

Summary – Exporting data for a set of urls via Analytics Edge.

There are times you want to quickly understand how certain urls are performing across a site (like after major algorithm updates) without having to sift through all of the data from that site. By using the approach I mapped out in this tutorial, you can leverage Analytics Edge and the GSC API to do just that (and across Google surfaces). It shouldn’t take long to set up, and you’ll always have that template for future projects. I think you’ll dig it. :)

GG