Wednesday was a relatively normal day for me in Google Land. I was auditing client sites, still digging into the March 2026 core update movement, and posting the latest SEO and AI Search news across social media. But then an interesting email arrived. It was from a company I helped last year and the subject line said it all…

“We are not in Google’s Index!”

Well, that caught my attention… It’s a company in a YMYL niche that has been impacted by several major updates in the past but has worked hard to improve over time. Also, they have been doing quite well Google-wise over the past six months while they continue to work on improving quality across the site (content, UX, technical SEO, etc.). The site has a footprint of about 20K URLs, so it’s not a huge site, but it’s not small either.

I can’t go into too much detail about their focus or content, but there is a programmatic aspect to the site. In addition, I covered an important topic with them during the audit last year, which was AI-generated content. There are pockets of AI-generated content across a number of pages on the site and it definitely concerned me. And if you follow me on social media or read my blog, you know I am not a fan of sites publishing 100% AI-generated content (and especially for YMYL content). I coined the term “Mt. AI”, and for good reason.

To clarify, entire pages weren’t AI-generated, but some sections were. The AI content wasn’t spammy or egregious, nor was it there to game Google’s algorithms, but there was quite a bit of it when I audited the site last year. Since the audit, they have been working to address that situation by publishing more human content, but it’s taking time to address across the entire site. And again, there was always human content, just with sections of AI-generated content mixed in.

Back to the email… As you can guess, with an emergency email arriving about being deindexed, my first thought went straight to AI and programmatic content. But as with any good mystery, things may not be what they seem in the early stages of the story.

In this post, I’ll cover what took place, how I troubleshot the situation, what my client found, and how the situation ended up. Also, I want to thank my client for letting me publish this case study. I think it could help other site owners who might find themselves in a similar situation. It was an interesting one, that’s for sure.

Let’s get started.

The Email – HELP, we’ve been completely deindexed!

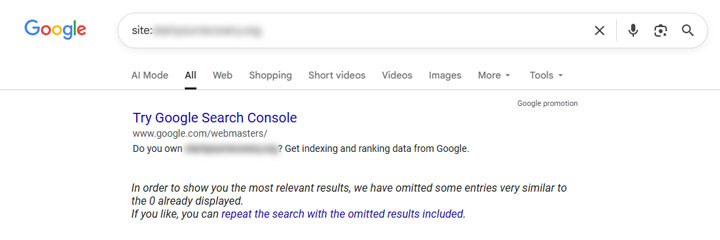

After receiving the email, I immediately ran a quick site query. Yep, the site was completely deindexed. That’s a big hammer for Google so something was clearly not right. They were nuked from the SERPs. Poof, gone.

Next, I jumped into GSC to check for manual actions or security issues. There was not a manual action yet and the security viewer was clear as well. Note, that does not mean the site didn’t have a manual action. Manual actions can be delayed and I explained that to my client. And without a manual action, you must put your SEO detective hat on and start investigating the situation. That’s where things got interesting.

Based on what I explained before, my first thought was the AI-generated content since it was across a number of pages on the site. It wasn’t the full content of the page but there was a lot of it in sections within pages. So was this a manual action for “Scaled content abuse” or was there something else at play?

GSC to the rescue and the importance of adding ALL properties for your site:

I immediately dug into GSC to see what I could find. There were properties set up for the core url-prefix version of the site (https non-www property), a domain property, and then some directory properties. But one important property was missing and it’s one I always recommend setting up. That’s the https www property even if a site isn’t using that version. I’ll come back to this important finding soon.

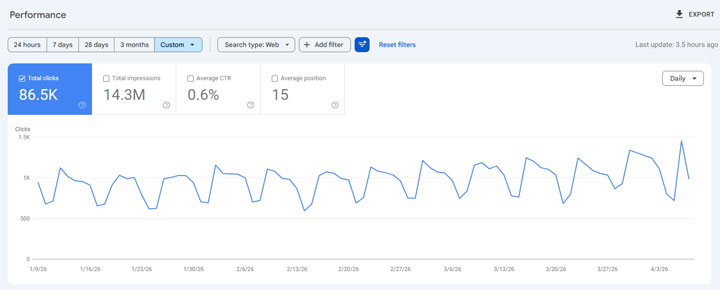

When digging in, the core url-prefix property for the site (https non-www) looked totally normal in the performance reporting. Clicks and impressions were chugging along as usual with a decent increase based on the March 2026 core update. Again, they have been working hard to improve over time.

But then I started digging into the other properties. And it didn’t take long to find something that looked very off.

A huge spike in impressions and traffic, and just for one page:

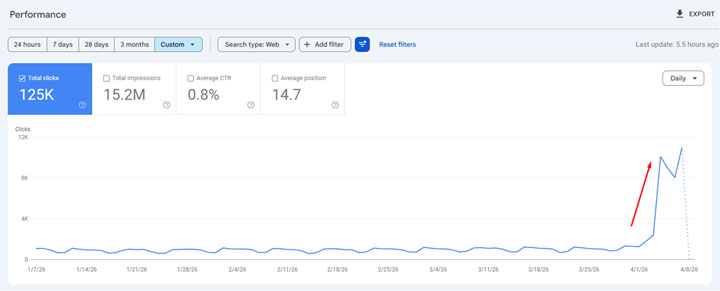

When checking the domain property, which covers all protocols and subdomains, I noticed a huge spike in impressions and clicks on one specific day. Filtering by page revealed it was the homepage of the non-canonical version of the site (https www). It oddly spiked like crazy on one day. The site jumped to nearly 12K clicks per day out of the blue…

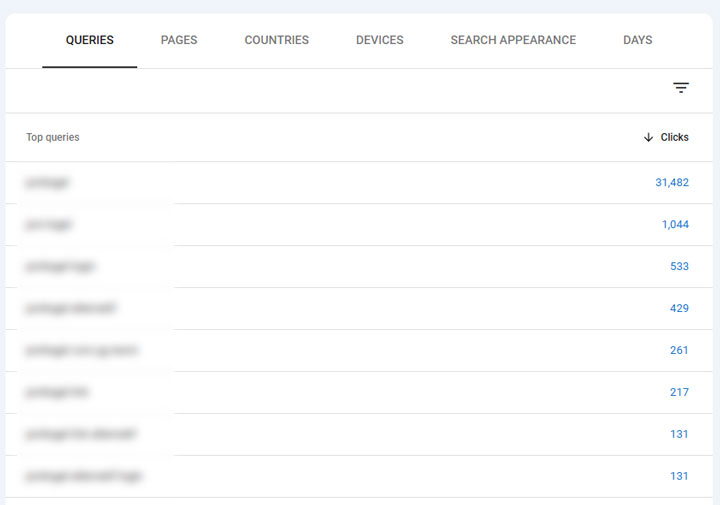

And checking the queries that page ranked for yielded a critical problem. All of the queries were gambling related. Yep, the site was hacked and it was just the www version of the homepage that was hacked, which was redirecting to some gambling site. And since my client was just checking the canonical version of the site (https non-www), they didn’t even realize this happened. The domain property revealed the problem but they weren’t checking that property. Also, having the https www version set up would have revealed the issue as well (but that wasn’t even set up)…
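If you want to automate that kind of check, here is a minimal sketch of the idea. It assumes you have already exported daily clicks for a single page from the GSC performance report into a plain Python list. The function name, the thresholds, and the sample numbers are all hypothetical (they are not my client's data), so treat this as a starting point rather than a finished monitor.

```python
from statistics import median

def find_spike_days(daily_clicks, multiplier=5.0, min_clicks=100):
    """Return the indexes of days whose clicks dwarf the page's usual level.

    A day is flagged when its clicks are at least `min_clicks` and more than
    `multiplier` times the median of all the other days in the list.
    Both thresholds are illustrative assumptions, not recommended values.
    """
    spikes = []
    for i, clicks in enumerate(daily_clicks):
        others = daily_clicks[:i] + daily_clicks[i + 1:]
        if not others:
            continue  # nothing to compare a single day against
        baseline = max(median(others), 1)  # avoid a zero baseline
        if clicks >= min_clicks and clicks > multiplier * baseline:
            spikes.append(i)
    return spikes

# Hypothetical daily clicks for one page (made-up numbers for illustration)
www_homepage_clicks = [3, 5, 2, 4, 12000, 6, 3]
print(find_spike_days(www_homepage_clicks))
```

Running something like this per page across every property (and especially the domain property) would flag a one-day surge like the one described above. You would then still need to check the queries for that page to understand what actually happened.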

Here are the gambling queries when you isolated the www homepage:

The visibility tools picked this up as well. And you could see the various SERP screenshots that were captured during the hack showing the www homepage with a new favicon, title, and snippet. And again, when you clicked through, the hacked page redirected users downstream to a gambling site. Remember, this was a site covering a YMYL topic… Oof.

I immediately emailed my client explaining they were hacked and included all of the screenshots. They moved quickly to clean up the problem and to close the security hole. I’ll cover what that security vulnerability was soon, but they cleaned everything up very quickly (within an hour or so). But, they were still deindexed and were confused about next steps.

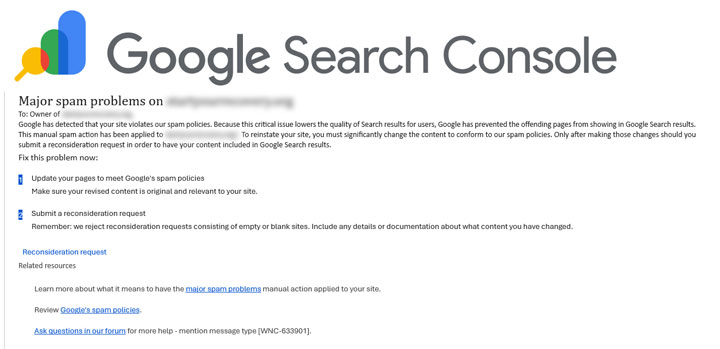

The delayed manual action finally shows up:

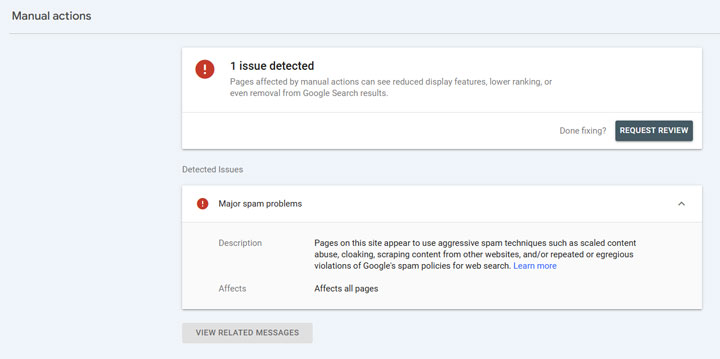

I told my client when they first emailed me that a manual action was probably on its way… and I was right. The next morning (3AM ET to be specific), the manual action arrived. And it was for “Major spam problems” impacting the entire site. Interesting that Google used “Major spam problems” and that it impacted the entire site since it was only the homepage of the www subdomain, but regardless, the entire site was indeed impacted.

My client immediately filed a reconsideration request explaining they were hacked, that they cleaned up the situation, etc. Now they just needed to wait for Google to review the request. I was hoping that would happen quickly, but you never know…

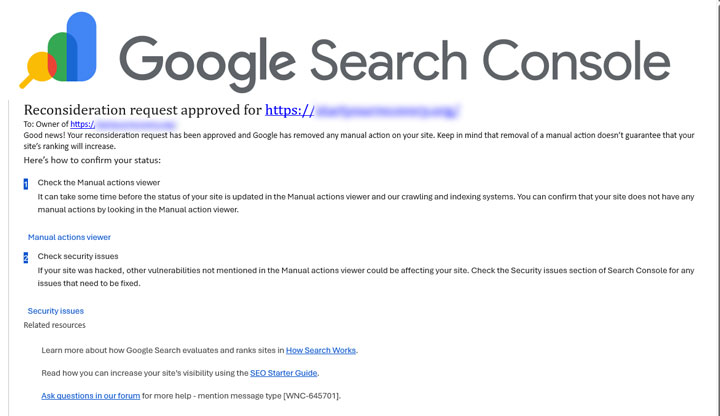

But quick it was! The next morning I noticed all of the pages were showing back up in the SERPs via site query, even though my client hadn’t received a message back from Google yet about the reconsideration request. I notified my client immediately and they were thrilled to see the site back ranking where it should be. And then the message from Google finally showed up a few hours later in GSC and via email. The reconsideration request was approved and the manual action lifted.

The Security Hole – DNS:

Once I sent the information through about the www version of the homepage getting hacked, the technical lead for my client moved quickly to rectify the situation. He also explained how this happened via email. I’ll provide the details below. I hope this can help some site owners out there who might have a similar setup. That is, the following bullets could help you avoid opening a security hole or, if you get hacked, close that hole quickly!

Here’s the rundown (directly from my client):

- Nothing on our site uses www and we had redirect rules driving all requests to non www. And all of our (site) monitoring was pointed at non www version of our site. That was a mistake.

- Our www DNS entry pointed to an old Azure web app that helped with the redirects (among other things).

- We forgot about this www entry and decommissioned the old web app. As soon as Azure released the web app name (and corresponding DNS entry), someone else grabbed it and pointed it at their spam site. I’m kind of amazed Azure allowed something so sketchy to run on their platform but this is undoubtedly harder to monitor than you’d think. So no warnings or flags were triggered and we didn’t even think to look at www.

- It was easy to go fix the www DNS entry but then it takes a bit of time to have DNS propagate and then Google to see that the problem had been rectified.

So if your site is resolving as non-www, then make sure you know how www is being handled. Don’t just monitor the non-www version. Make sure you monitor www as well. If not, someone could eventually take control of it and redirect users to any site they want (including spammy or dangerous sites). And then you could get nuked from the SERPs like this company did.
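For anyone who wants a simple way to keep an eye on the non-canonical hostname, here is a rough sketch using only the Python standard library. It assumes the www host is supposed to answer with a redirect to the canonical non-www host, which was the intended setup in this case. The function names and the list of accepted status codes are my own assumptions, and this is not the monitoring my client put in place.

```python
import http.client
from urllib.parse import urlsplit

REDIRECT_CODES = (301, 302, 307, 308)

def fetch_status_and_location(host, timeout=10):
    """Send a HEAD request to https://<host>/ without following redirects.

    Returns the status code and the raw Location header (or None).
    """
    conn = http.client.HTTPSConnection(host, timeout=timeout)
    try:
        conn.request("HEAD", "/")
        resp = conn.getresponse()
        return resp.status, resp.getheader("Location")
    finally:
        conn.close()

def www_looks_safe(status, location, canonical_host):
    """True only when the response redirects to the canonical host.

    Anything else (a 200 page, a redirect to another domain, or a
    relative redirect) is treated as something a human should review.
    """
    if status not in REDIRECT_CODES or not location:
        return False
    target_host = urlsplit(location).hostname
    return target_host == canonical_host.lower()
```

To use it, you could call `fetch_status_and_location("www.example.com")` on a schedule, pass the result to `www_looks_safe(status, location, "example.com")`, and send an alert whenever it returns `False`. Keeping the decision logic separate from the network call makes it easy to test without hitting a live site. Note that a check like this only looks at what the server returns. You would still want to review the DNS records themselves so a www entry never points at a resource you no longer control.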

And the manual action lagged a bit, so there was about a day of stress while figuring this all out. Then they filed a reconsideration request and the manual action was lifted the following day. I’ll end with some final bullets for site owners based on this situation.

Avoiding Disaster: Key points for site owners.

- Make sure to set up ALL versions of your site in GSC as properties. That includes a domain property, https www, https non-www, http www, http non-www, important directories, etc.

- Monitor all versions of your site from a security and performance perspective. If your site only resolves at www, or non-www, make sure you are still monitoring the other version.

- Ensure DNS is handled properly for your site. As this case demonstrated, what seems like one minor change yielded an opportunity for hackers to take control of the www version of the homepage. Then all hell broke loose.

- Manual actions can take time to show up in Search Console even when the site is already being impacted in the search results. So there’s a lag that can be confusing for site owners. Just understand this can happen as you dig into the situation. Also, don’t make any rash decisions until you know what the manual action is for (and that you definitely have a manual action).

- When investigating the problem, dig into each GSC property and look for strange surges or drops. In this situation, the https www version of the homepage surged like crazy. Isolating that page and viewing the queries yielded the problem. Slice and dice your GSC reporting. You can often find the culprit.

- Although AI-generated content wasn’t the cause of this manual action, you should still be very careful with how you utilize AI content, and scale it. Do not just scale 100% AI-generated content and think that will be ok over the long term. I have covered what I call “Mt. AI” many times across social media. Beware.
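To make the first bullet concrete, here is a small sketch that lists the property variants worth adding for a given domain. It assumes the canonical version is https non-www (as it was for my client) when building the directory properties, and the function name and `directories` parameter are hypothetical. The `sc-domain:` prefix is how domain properties are identified in the Search Console API. You would still add and verify each property in GSC yourself.

```python
def gsc_property_variants(domain, directories=()):
    """List the GSC properties to set up for a domain.

    Covers the domain property, all four protocol/hostname url-prefix
    combinations, and any important directories on the canonical
    (assumed https non-www) version.
    """
    bare = domain.lower()
    if bare.startswith("www."):
        bare = bare[4:]  # normalize to the non-www hostname

    variants = [f"sc-domain:{bare}"]
    for scheme in ("https", "http"):
        for host in (bare, f"www.{bare}"):
            variants.append(f"{scheme}://{host}/")
    for directory in directories:
        variants.append(f"https://{bare}/{directory.strip('/')}/")
    return variants

# Example with a placeholder domain and a placeholder directory
for prop in gsc_property_variants("example.com", ["blog"]):
    print(prop)
```

Comparing that list against the properties you actually have in GSC is a quick way to spot a gap like the missing https www property in this case.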

Summary – A DNS vulnerability led to a manual action and a site completely deindexed.

That was a pretty stressful few days for my client and I appreciate them letting me write this post. Again, I hope it can help some site owners out there that might run into the same type of vulnerability with their own sites. This case demonstrates how one page getting hacked led to an entire site getting flagged as spam. Then they were deindexed and removed from the search results.

And to add insult to injury, manual actions can often show up down the line AFTER the site is impacted in the search results. So make sure you stay on top of site security. Then you can hopefully avoid a situation like this. The good news is that my client is back in the index, the manual action is removed, they are ranking where they were, and their business is humming again. Oh, and you better believe they are now monitoring the www version of the site. :)

GG