Update: April 2022

I just published a post explaining how sites can use NewsGuard’s nutritional labels to avoid manual actions for violating Google’s medical policy (for News and Discover). This is based on helping sites that received manual actions in January of 2022.

—–

Based partly on the August 1 Google algorithm update, there’s been an extreme focus from site owners and SEOs on building and demonstrating E-A-T (expertise, authoritativeness, and trust). Now, the concept of E-A-T has been documented in Google’s quality rater guidelines (QRG) for years, but the recent update did seem to have an element that turned up the volume from an E-A-T perspective (and especially for sites focused on health and medical.) That’s why Barry Schwartz decided to name it the Medic Update.

Keep in mind that many different types of sites were impacted by the August 1 update and Google even confirmed they didn’t target sites that could be categorized as Your Money or Your Life (YMYL). Also, it’s important to know that you can’t build E-A-T quickly. I’ve mentioned that in my previous posts about major algorithm updates. You can, however, build strong E-A-T over time if you are doing the right things (building great content written by experts in your niche, using Social to reach a targeted audience, naturally building links from well-known sources, etc.)

Regarding the Quality Rater Guidelines (QRG), Google’s quality raters are asked to evaluate its algorithms, and E-A-T comes up many times in the 160-page guide. Note, raters cannot directly impact the rankings of any specific site, but their feedback is sent to the engineers, who then refine their algorithms. Therefore, they sure can impact rankings indirectly.

Based on what I explained above, site owners tend to have a few important questions:

- Is my site and content trusted?

- Are my writers perceived as experts in a niche?

- Which specific factors on my site would cause users (and Google) to not trust the site?

- Is there any way to see ratings for my site?

When helping companies that have been significantly impacted by a major Google algorithm update, it’s important to review the site overall, identify all problems that could be causing issues, and form a remediation plan for fixing those problems as quickly as possible. And helping companies determine perceived E-A-T is important. For example, do you have expert authors, are you deceiving users, does your content exhibit a high level of authoritativeness, etc.

The topic is extremely nuanced, since each niche category is different. For example, a medical site is different from a financial site, which is different from a dating website. And those sites are much different than coupon sites or lyrics websites. Again, there are many different types of sites on the web. And to address the last question from above about actually seeing ratings, there’s no way to see what raters thought of your site while evaluating Google’s algorithms.

That’s unless you’re a news publisher… Did I get your attention? :)

Well, you can’t see what Google’s quality raters think, but you can view what Newsguard’s team of analysts thinks. And that can be a good proxy for understanding issues your site has regarding trust, credibility, and transparency.

Newsguard – Publicly evaluating trust and transparency of news publishers

Based on what I explained above, it’s great when you can get objective third parties to review a site (almost like your own version of Google’s quality raters). You can do that on your own, but there’s a good amount of work to do in order to run effective user studies. That’s why it’s great when you can find public sources of information from other services that can yield strong feedback about your site. That’s exactly what Newsguard is doing (for news publishers).

On its site, Newsguard explains that it aims to fight fake news, misinformation, and disinformation. I’ll explain more about Newsguard in the next section, but think of it as a way for the average person to quickly identify organizations that are adhering to important journalistic standards, and not participating in misleading the public.

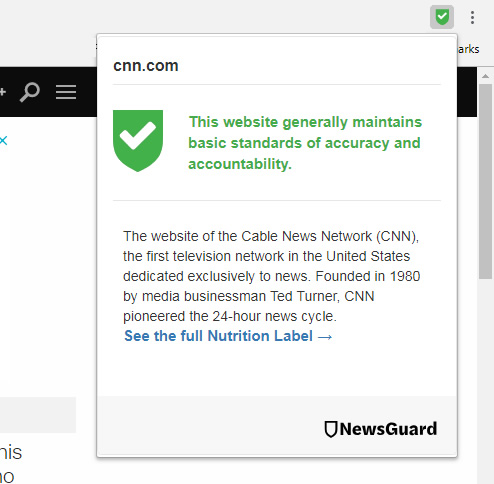

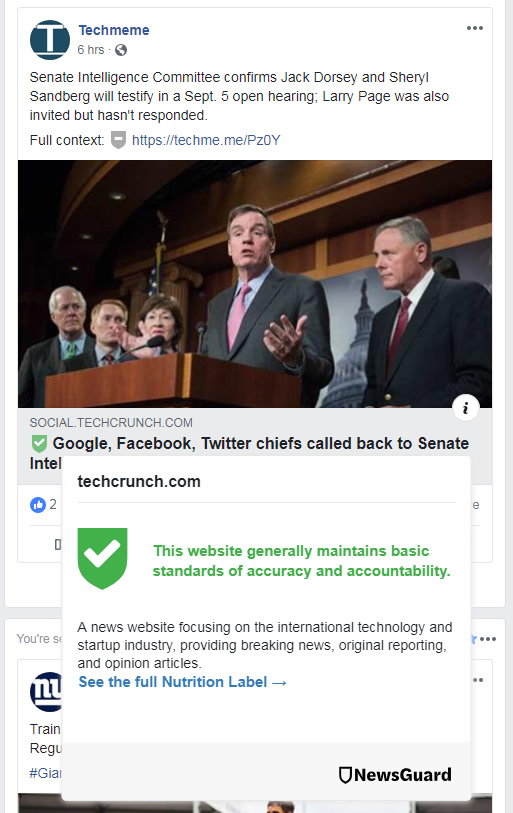

Newsguard has a Chrome plugin that you can install that provides a quick way to see ratings for news publishers across Search and Social Media. You can also see ratings when visiting the sites in question. As soon as I read an article about Newsguard, I had to check it out. And after using it, I found it fascinating to receive objective feedback about the trustworthiness of various news organizations. It had me wishing there was a Newsguard for every category of website.

How It Works – Criteria and Nutrition Label

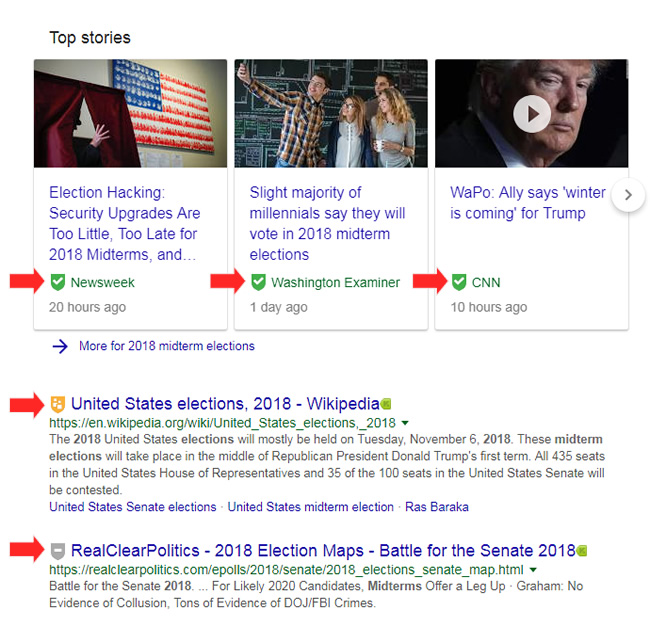

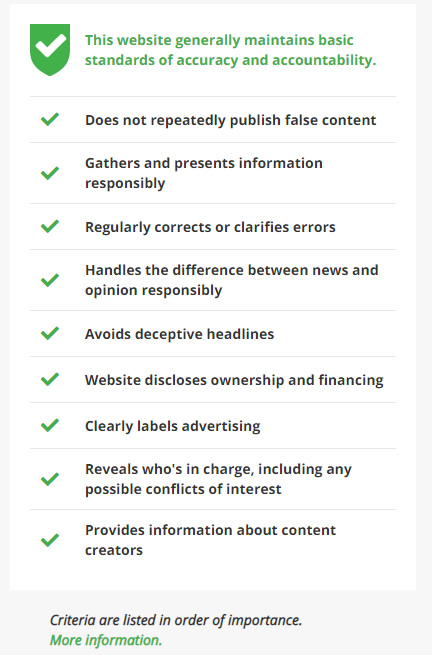

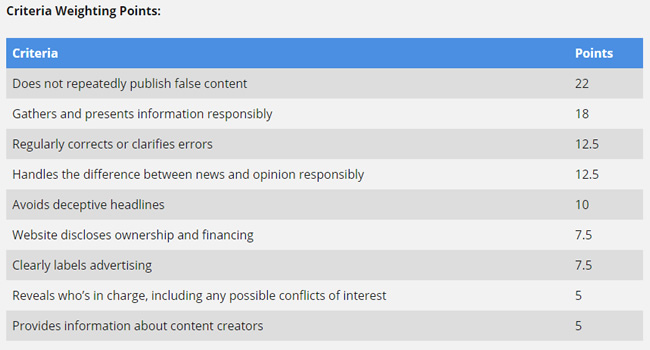

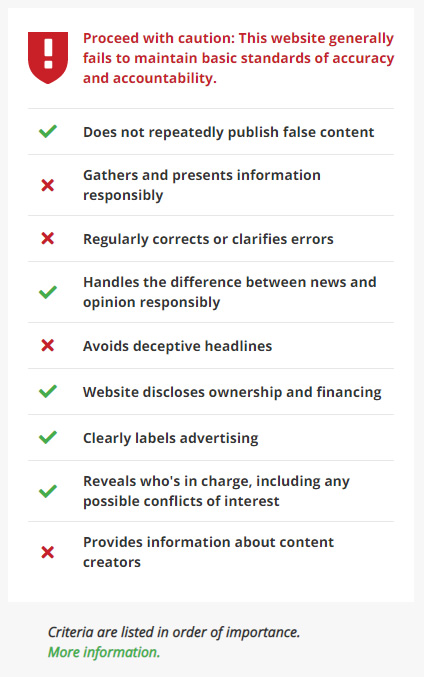

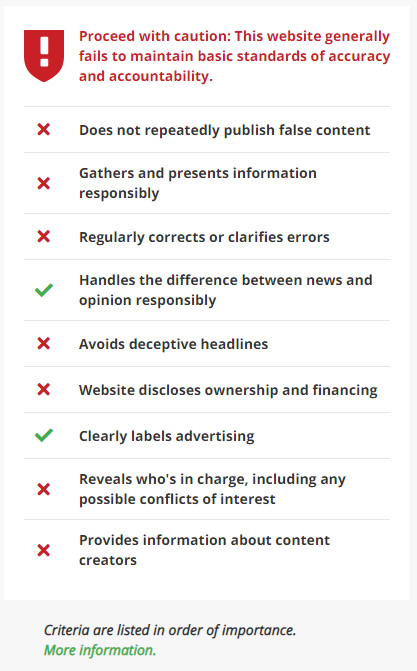

Newsguard’s analysts, who are trained journalists, objectively rate news publishers based on nine criteria broken down into two major categories, credibility and transparency. Each criterion is weighted and worth a certain number of points. If a site receives 60 points or higher, it gets a green label (which is displayed in the browser, in the Search results, and across Social Media sites). If it falls below 60, it gets a red label. There are also other labels for user-generated content, satire and humor sites, etc.

For example, here’s what the plugin looks like in the browser window:

And here’s what it looks like in the Search results:

And here’s what it looks like across Social Media sites:

And you don’t just receive basic feedback (like the score). You can see a full “nutrition label” to learn more about why the site received that score, what the analysts listed as reasons for the scoring, etc. Newsguard explains they are completely transparent about why a site received a certain score. They also reach out to the sites being reviewed for more information and will publish that too. And if a score needs to be adjusted based on new information, Newsguard will document that information as well. You can read more about their corrections policy on the site.

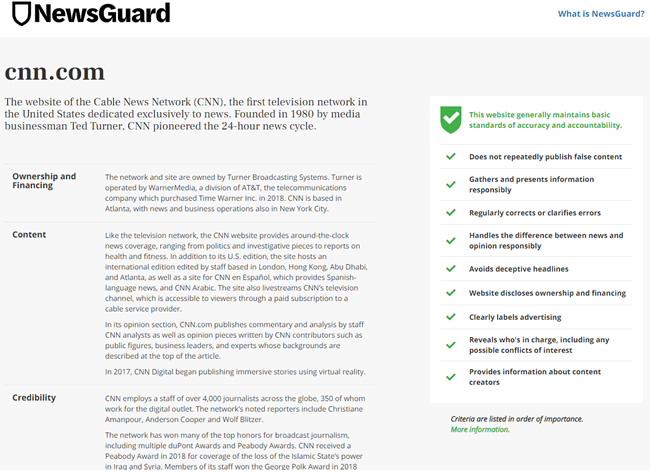

Here’s an example of a nutrition label for CNN:

And you can read the full rating with information from analysts:

9 Factors Evaluated By Analysts

You can read more about the criteria on the Newsguard website, but they look at a number of factors that are important for any news publisher (and it’s important to note that there’s overlap with certain parts of Google’s quality rater guidelines).

For example, from a credibility standpoint, does the site:

- Avoid publishing false content.

- Gather and present information responsibly.

- Correct or clarify errors.

- Handle the difference between news and opinion responsibly.

- Avoid deceptive headlines.

And from a transparency standpoint, does the site:

- Disclose ownership and financing.

- Clearly label advertising.

- Reveal who is in charge, including conflicts of interest.

- Provide information about content creators.
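To make the scoring mechanics concrete, here is a minimal Python sketch of a weighted pass/fail checklist with a 60-point cutoff. The nine criteria names mirror the bullets above and the 60-point threshold comes from Newsguard’s description, but the individual weights are placeholders I made up so the total comes to 100. They are not Newsguard’s published values. The sketch also assumes each criterion is all-or-nothing (full points if met, zero if not).

```python
# Hypothetical weights for illustration only. These are NOT Newsguard's
# published values; they were chosen so the nine criteria sum to 100.
ASSUMED_WEIGHTS = {
    # Credibility
    "avoids_false_content": 20.0,
    "gathers_info_responsibly": 15.0,
    "corrects_errors": 15.0,
    "separates_news_and_opinion": 10.0,
    "avoids_deceptive_headlines": 10.0,
    # Transparency
    "discloses_ownership": 10.0,
    "labels_advertising": 10.0,
    "reveals_who_is_in_charge": 5.0,
    "identifies_content_creators": 5.0,
}

PASSING_SCORE = 60  # 60 points or higher earns a green label


def score_site(criteria_met, weights=ASSUMED_WEIGHTS):
    """Sum the points for every criterion the site meets.

    Assumes all-or-nothing scoring; a criterion missing from
    `criteria_met` is treated as not met.
    """
    return sum(
        points for name, points in weights.items() if criteria_met.get(name, False)
    )


def label_for(score):
    """Map a numeric score to the green/red label."""
    return "green" if score >= PASSING_SCORE else "red"


# Example: a site that meets everything except the first three criteria.
example = {name: True for name in ASSUMED_WEIGHTS}
example["avoids_false_content"] = False
example["gathers_info_responsibly"] = False
example["corrects_errors"] = False
print(score_site(example), label_for(score_site(example)))
```

Under these made-up weights, missing a few heavily weighted credibility criteria is enough to push a site under the threshold, even if it passes every transparency check.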

Again, each factor is scored and weighted, with 60 points being the difference between a green label and red label. You can see the weighting below:

And for those of you reading this post who are involved in SEO, your QRG antennae might have gone up several times when reading those bullet points. For example, they mention deceptive advertising, bios for content creators, deceptive headlines, and more. Again, wouldn’t it be great to have this objective feedback as a starting point for every site on the web?

Examples of Site Ratings:

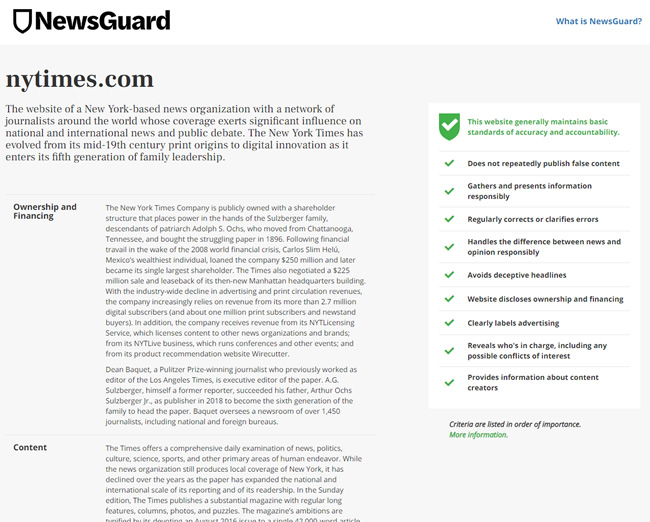

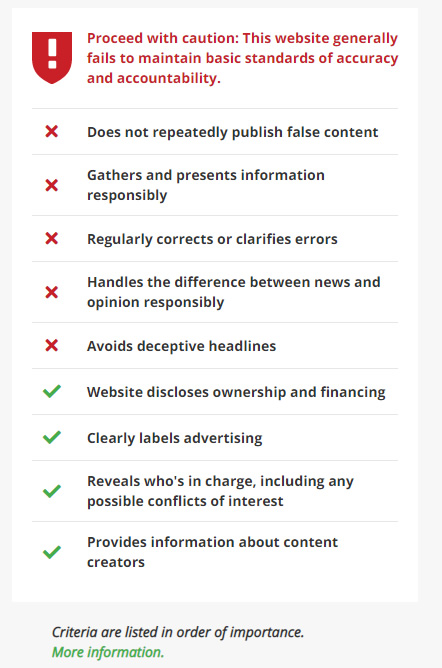

Below, I’ll provide a few examples so you can quickly see two sites on opposite ends of the spectrum. One receives very strong ratings, while the other sits below the 60-point threshold.

The New York Times (view the page on Newsguard.com):

A news publisher with 6 major categories of content, including YMYL topics, that received a red badge (less than 60 points):

Not listed? Submit your site.

As you can guess, the major limitation here is that this is a very manual process for Newsguard. That’s why I came across a number of news publishers that were in the process of being reviewed, or not even in the system yet. I don’t know how long it takes analysts to review each site, and then how long it takes for editors to review those ratings, and then for the co-CEOs to make a final review, but the process doesn’t seem quick. I’m not saying it should be quick, but I can see them getting swamped with requests very quickly. You can read more about the review process on the site.

So if you don’t see a rating for your news site, then feel free to submit it to Newsguard. I submitted a few dozen during my testing. It should be interesting to see how long it takes for those submissions to be reviewed. I’ll share more on Twitter when I see some of those submissions finally receive scores.

Here’s an example of a site in the process of being reviewed:

A Note About Google’s Major Core Algorithm Updates – How do sites with failing grades fare during major updates?

While testing Newsguard across the web to check news publishers, I kept wondering how good a proxy it was for Google’s quality rater guidelines (and how that would manifest itself during major algorithm updates).

For example, would failing sites also see negative movement during broad core updates? Let’s face it, publishing false information, providing deceptive advertising, using deceptive headlines, and more certainly are things that could cause algorithmic problems. But remember, Newsguard is only tackling a piece of the quality rater guidelines (and clearly not looking at everything Google is looking at). There are 160 pages in the guidelines and Newsguard focuses heavily on trust, credibility, and transparency.

But I was still interested in seeing the correlation. I’m not saying a failing Newsguard grade would mean you are going to tank during a major Google update, but it sure can provide some important feedback from Newsguard analysts about how your site is perceived. I plan to dig in more to see the connection between low ratings and algorithmic hits, but for now I just checked some news publishers with failing scores. I provided two examples below.

This news site failed and had previously been hammered by multiple major algorithm updates over the past two years. But they did work hard on fixing many issues on the site recently and ended up surging during the August 1, 2018 update. So the ratings were completed before that surge happened. I’m recommending that the site contact Newsguard to provide additional information about their recent changes (and to see if they can get a fresh review).

And here’s a site that got hammered by the May 2017 update and has never come back. I haven’t analyzed this site heavily, but you can quickly see many serious issues when visiting the site. It’s pretty clear there are quality issues, UX barriers, and aggressive advertising problems throughout the site. A failing rating is justified here in my opinion, and they did get hammered by a major algorithm update.

Summary – Free quality rater feedback from Newsguard

With many marketers now heavily interested in Google’s quality rater guidelines and E-A-T, I found it interesting to learn more about Newsguard, the criteria they use to rate sites, and to see how various news publishers fared with their scoring system.

At a minimum, news publishers should review their scores and look to improve, where possible. Again, it’s like seeing what Google’s quality raters think of a site without having access to the real quality raters. I believe having a public resource like this can be extremely valuable for site owners, journalists, and SEOs. I just wish there was a Newsguard for all sites. Hey, maybe there will be some day.

GG