Updated on 7/29/23: OpenAI shut down its AI content detection tool on July 24, 2023, due to its low rate of accuracy. The tool now 404s, so I removed links to the tool from this post.

Updated on 2/1/23: OpenAI’s AI content detection tool (AI Text Classifier) was added to the list. GPTZeroX was also included (an upgrade to GPTZero).

Updated on 1/10/23: GPTZero (created by a Princeton University senior) was added to the list of AI content detection tools.

Updated on 12/29/22: Content at Scale’s AI content detection tool was added.

Updated on 12/14/22: Writer’s AI content detection tool has been updated to detect GPT-3, GPT-3.5, and ChatGPT.

Updated on 12/13/22: Originality.ai was added to the list of AI content detection tools.

———-

As I’ve been sharing examples of sites getting pummeled by the Helpful Content Update (HCU) or the October Spam Update, I’ve also been sharing screenshots from tools that detect AI content. That’s because some of the sites getting hit are using AI to pump out a lot of lower-quality content (among other things they were doing that could get them in trouble). Based on those screenshots, many people have been asking me which tools I’m using.

So, instead of answering that question a million times (seriously, it might be a million), I figured I would write a quick post listing the top tools I have come across. Then I can just quickly point people to this post versus answering the question over and over.

And note, I’m not saying these tools are foolproof. I have just found them to be pretty darn good at detecting lower-quality AI content. And that’s what we should be trying to detect, by the way (not all AI content, just low-quality AI content that could potentially get a site in trouble SEO-wise).

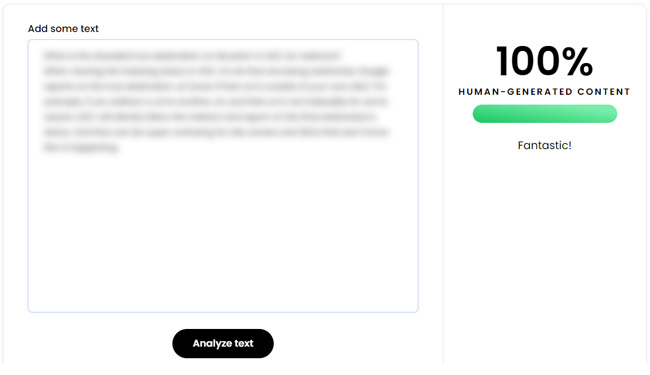

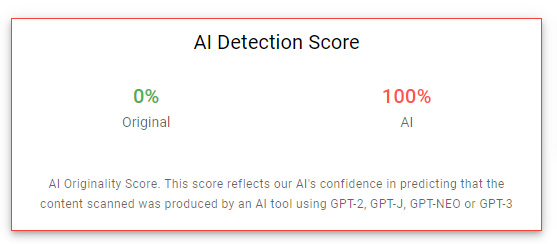

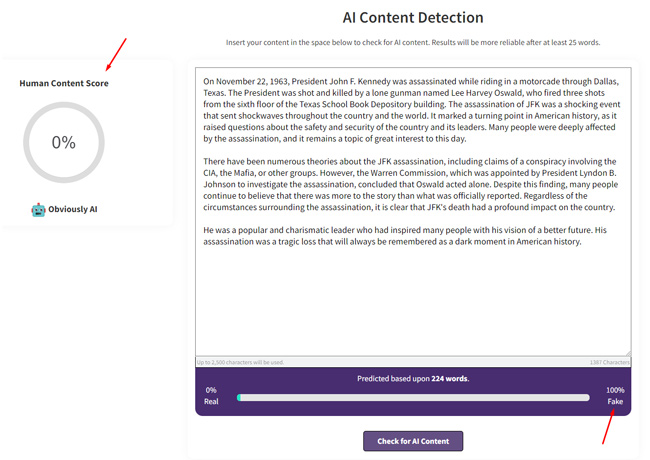

For example, here is high-quality human content run through a tool:

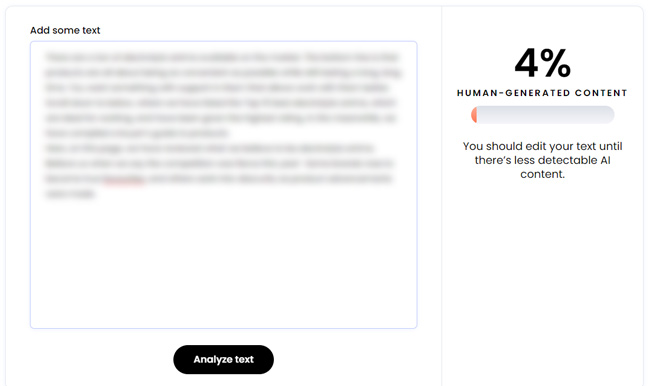

And here is an example of lower-quality AI content run through a tool:

Again, it’s not foolproof, but it can give you a quick sense of whether AI was used to generate the content. Below, I’ll cover my favorite AI content detectors I’ve come across so far. I’ll also keep adding to this list, so feel free to ping me on Twitter if you have a tool that’s great at detecting lower-quality AI content!

Here is a list of tools covered in this post for detecting AI content:

- Writer’s AI content detector tool

- Hugging Face GPT-2 Output Detector Demo

- Giant Language Model Test Room (GLTR)

- Originality.ai (AI content and plagiarism detection)

- Content at Scale’s AI content detection tool

- GPTZeroX

- OpenAI’s AI Text Classifier

1. Writer’s AI content detector tool:

The first tool I’ll cover is from a company that has an AI writing platform (somewhat ironic, but it does make sense). From what I can see, the platform is geared more toward assisting writers. You can check out their site for more information about the platform. Well, they also have a nifty AI content detector that works very well. You have probably seen my screenshots from the tool several times on Twitter and LinkedIn. :)

Update: 12/14/22 – While I was testing content created via GPT-3.5 and ChatGPT, I noticed that Writer’s detection tool was accurately flagging the content as AI-generated. That was a change, since the tool was originally focused on GPT-2, so I quickly reached out to Writer’s CEO for more information. And my hunch was correct! Writer’s AI content detection tool has been updated to detect GPT-3, GPT-3.5, and ChatGPT. That makes it the second tool on the list that can do so.

AI content is progressing, but so are the tools. Below are some examples of using Writer’s AI content detection tool.

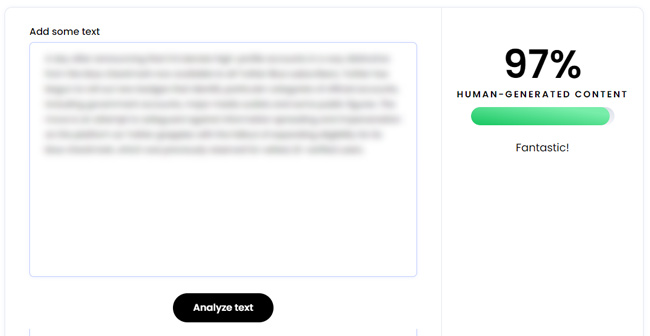

Here is Writer’s tool detecting higher-quality human content:

And here is Writer’s tool detecting content created via GPT-3.5 (using text-davinci-003, which is the latest model as of 12/14/22):

2. Hugging Face GPT-2 Output Detector Demo:

If you’re not familiar with Hugging Face, it’s one of the top communities and platforms for machine learning. You can check out their site for more information about what they do. Well, they also have a helpful AI content detector tool. Just paste some text and see what it returns. I have found it to be pretty good at detecting lower-quality AI content.

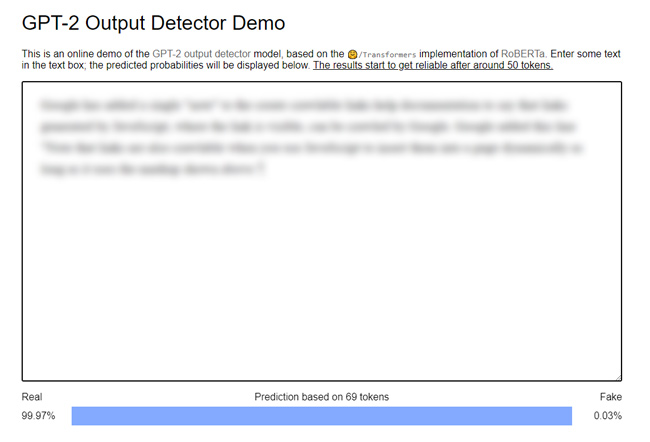

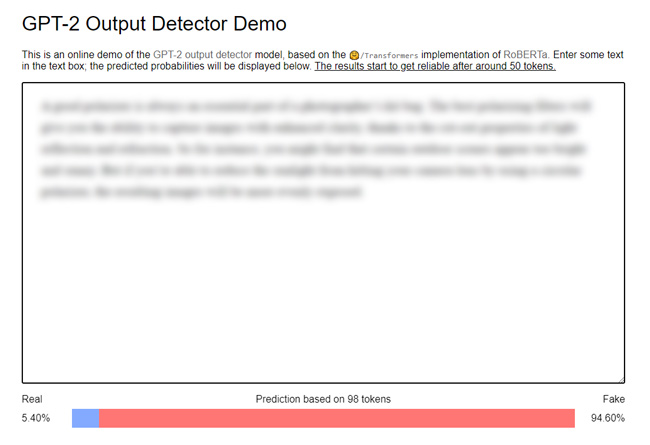

For example, here is Hugging Face’s tool detecting higher-quality human content:

And here is Hugging Face’s tool detecting lower-quality AI content:

3. Giant Language Model Test Room (GLTR.io)

The third tool I’ll cover was actually down recently, but I had heard good things about it from several people (when it was working). It turns out there was a server issue and the tool was hanging. Well, GLTR is back online now, and I’ve been testing it to see how well it detects AI content.

The tool was developed by Hendrik Strobelt, Sebastian Gehrmann, and Alexander Rush from the MIT-IBM Watson AI Lab and Harvard NLP. It’s definitely not as intuitive as the first two tools I covered, but once you get the hang of it, it can be very helpful.

How it works:

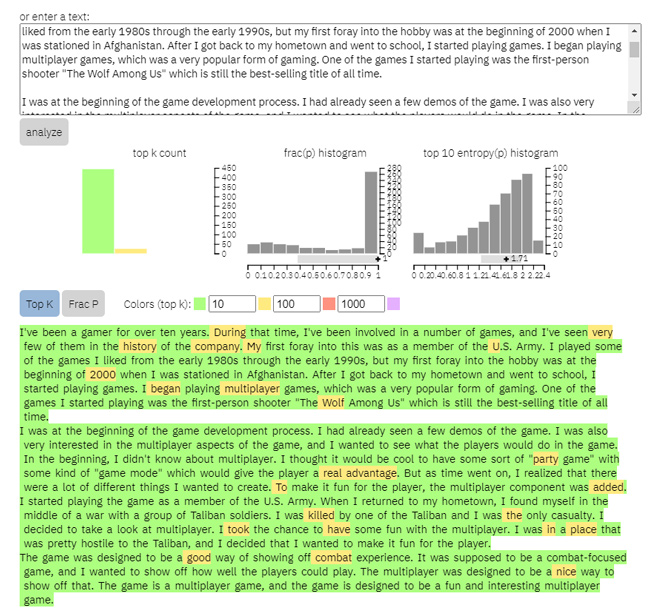

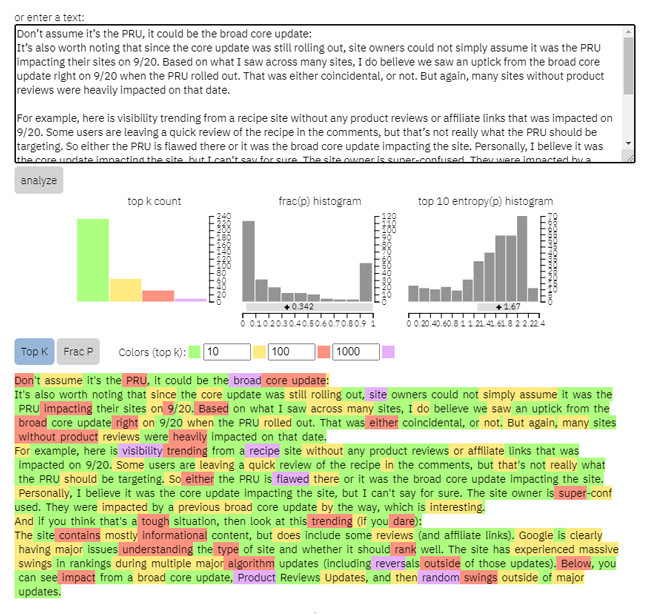

You can paste text into the tool and view a visual representation of the analysis, along with several histograms providing statistics about the text. I think most people will focus on the visual representation to get a feel for how likely each word would be the predicted word given the context to its left. That can help you identify whether a text was written by AI or by a human. Again, nothing is foolproof, but it can be helpful (and I’ve found the tool works well). To learn more about GLTR and how it works, you can read the detailed introduction on the site.

For example, if a word is highlighted in green, it’s in the top 10 most likely predicted words given the context to its left. Yellow highlighting indicates it’s in the top 100 predictions, red in the top 1,000, and the rest are highlighted in purple (even less likely to be predicted).

The fraction of red and purple words (unlikely predictions) increases when the text was written by a human. If you see a lot of green and yellow highlighting, then it can indicate the text contains many predicted words based on the language model (signaling the text could have been written by AI).
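To make the color buckets concrete, here is a minimal Python sketch of the bucketing logic described above. It assumes you already have each word’s rank among a language model’s predictions (GLTR computes these with a model such as GPT-2). The rank lists are made-up numbers for illustration, and the function names are hypothetical, not part of GLTR.

```python
from collections import Counter
from typing import Sequence


def gltr_color(rank: int) -> str:
    """Map a token's rank (1 = the model's most likely prediction) to a GLTR-style color."""
    if rank < 1:
        raise ValueError("rank must be >= 1")
    if rank <= 10:
        return "green"   # top 10 predictions
    if rank <= 100:
        return "yellow"  # top 100
    if rank <= 1000:
        return "red"     # top 1,000
    return "purple"      # everything less likely


def color_counts(ranks: Sequence[int]) -> Counter:
    """Count how many tokens fall into each color bucket."""
    return Counter(gltr_color(r) for r in ranks)


def unlikely_fraction(ranks: Sequence[int]) -> float:
    """Fraction of tokens highlighted red or purple (the 'unlikely' predictions)."""
    if not ranks:
        return 0.0
    counts = color_counts(ranks)
    return (counts["red"] + counts["purple"]) / len(ranks)


# Hypothetical per-token ranks; in GLTR these come from a language model.
ai_like = [1, 3, 2, 15, 1, 7, 40, 2]
human_like = [1, 250, 12, 4000, 3, 800, 60, 1]
print(unlikely_fraction(ai_like))     # 0.0
print(unlikely_fraction(human_like))  # 0.375
```

The sketch deliberately stops at the fraction. Like GLTR itself, it doesn’t pick a cut-off for “AI vs. human”; a higher share of red and purple words just leans toward human-written text.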

Here are two examples. The first shows AI content (many words highlighted in green and yellow). This text was generated via GPT-2.

And here is an example from one of my articles about broad core updates. Notice there are many words highlighted in red, and several purple words as well (signaling this is human-written text).

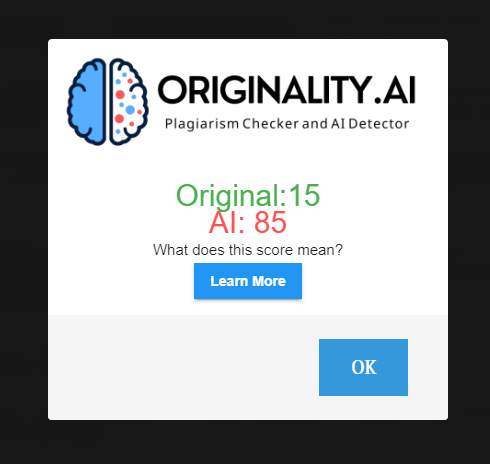

4. Originality.ai (for detecting GPT-3, GPT-3.5, and ChatGPT)

I was able to test Originality.ai recently and I’ve been extremely impressed with their platform. The CEO emailed me and explained they were one of the few tools able to detect GPT-3, GPT-3.5, and ChatGPT (as of December 13, 2022). Needless to say, I was excited to jump in and test out its AI content detection tool. It’s also worth noting that the tool can detect plagiarism (an added benefit). They have also released a Chrome extension, and they offer an API for handling requests in bulk. I’ll cover more about the Chrome extension below.

So, I fired up OpenAI and selected text-davinci-003 (the latest model as of 12/13/22) and started generating essays, short articles, how-tos, and more. I also used ChatGPT to generate a number of examples I could test.

And when I tested those examples in Originality.ai’s detection tool, it flagged the content as AI-generated every time. Again, I was extremely impressed with the solution.

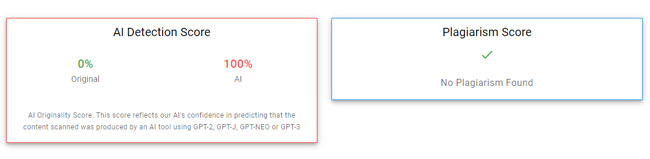

For example, here was a short essay generated via GPT-3.5:

And here was a how-to containing several paragraphs and then a bulleted list of steps. I also checked for plagiarism:

It’s not a free tool, so you will need to sign up and pay for credits. That said, it’s been a solid solution based on my testing. Note, they are providing a coupon code (BeOriginal) that gets you 50% off your first 2,000 credits. According to the site, one credit scans 100 words.
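If you want to budget credits, the arithmetic is simple. Here is a small Python sketch based on the “one credit scans 100 words” rate quoted above. The rule that a partial block of 100 words costs a full credit is my assumption, not something confirmed by Originality.ai, and the function names are hypothetical.

```python
WORDS_PER_CREDIT = 100  # "One credit scans 100 words" (per Originality.ai's site)


def credits_needed(word_count: int) -> int:
    """Estimate credits for one scan, assuming a partial block of 100 words costs a full credit."""
    if word_count < 0:
        raise ValueError("word_count must be non-negative")
    # Integer ceiling division: 1-100 words -> 1 credit, 101-200 -> 2, and so on.
    return (word_count + WORDS_PER_CREDIT - 1) // WORDS_PER_CREDIT


def words_covered(credits: int) -> int:
    """How many words a given number of credits can scan."""
    return credits * WORDS_PER_CREDIT


print(credits_needed(1450))  # 15
print(words_covered(2000))   # 200000
```

So under that assumption, the 2,000 discounted credits would cover roughly 200,000 words of scanning.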

Originality.ai Chrome Extension:

I mentioned earlier that Originality.ai has both a Chrome extension and an API. The Chrome extension enables you to highlight text on a page in Chrome and quickly check to see if it was written by AI. You must log in and use the credits you have purchased, so it’s not free. It works very well based on my testing so far.

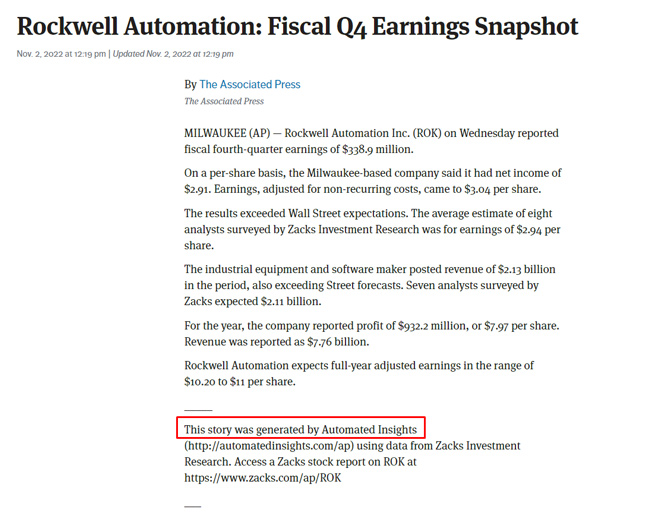

For example, here is an article created via Automated Insights. By highlighting the article text, right-clicking, and selecting Originality.ai in the menu, you can check to see if the content was created by AI.

5. Content at Scale

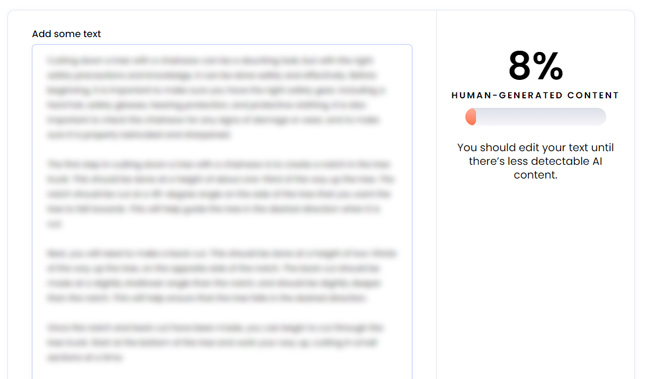

Next up is an AI content detector tool from Content at Scale. Like Writer, they provide a platform for AI content generation that uses an interesting approach. You can read more about the platform on their site. But, like Writer, they also have an AI content detection tool. You can enter up to 2,500 characters, and the tool will analyze the text and determine whether it’s AI content or human content. And like Originality.ai and Writer, it can detect GPT-3, GPT-3.5, and ChatGPT.

For example, here is the tool detecting AI content generated by ChatGPT (a short essay):

And here is the tool detecting content from one of Barry’s blog posts as human:

6. GPTZeroX

Next up is a new AI content detection tool created by a Princeton University student! And it’s causing quite the buzz. I’ve read a number of articles across major publications about Edward Tian and his tool called GPTZero, which aims to detect whether content was written by ChatGPT.

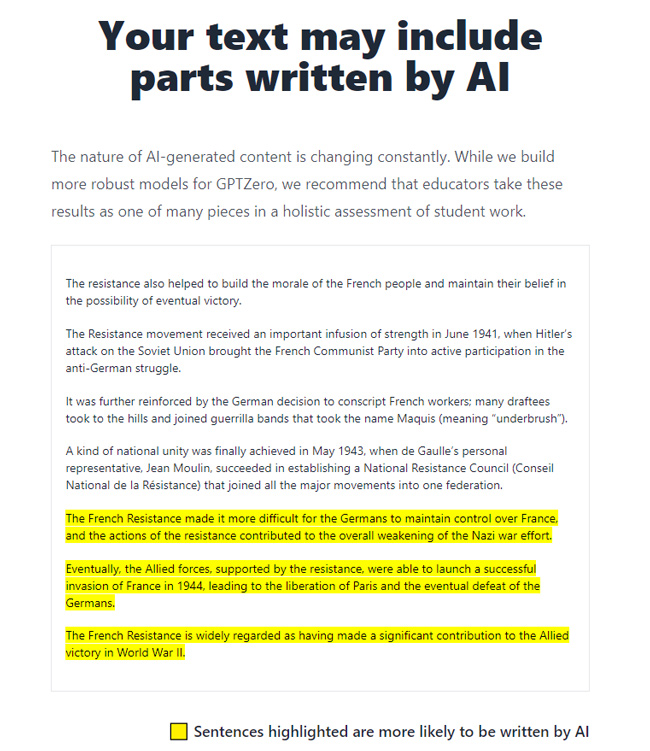

Update: 2/1/23 – GPTZeroX was just released and can highlight which parts of the text being tested are AI-generated. Its detection is more granular, which was a top feature request that Edward Tian heard from educators.

Beyond that, Edward explains that “GPTZeroX also supports larger text inputs, multiple .txt, word, and pdf file uploading, and lightning-fast processing speeds.” There is also an API now that can handle high-volume requests.

Here is GPTZeroX detecting part of the text as AI-generated:

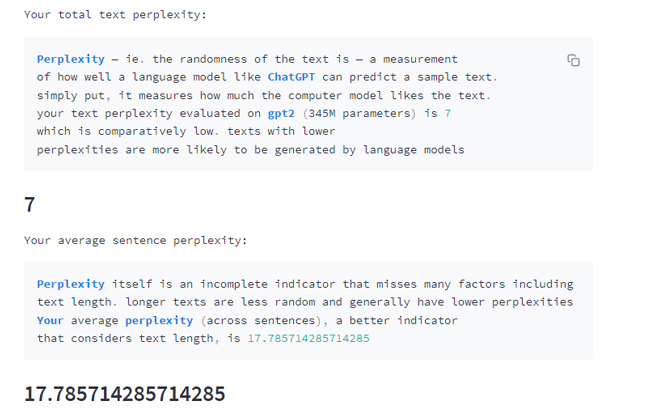

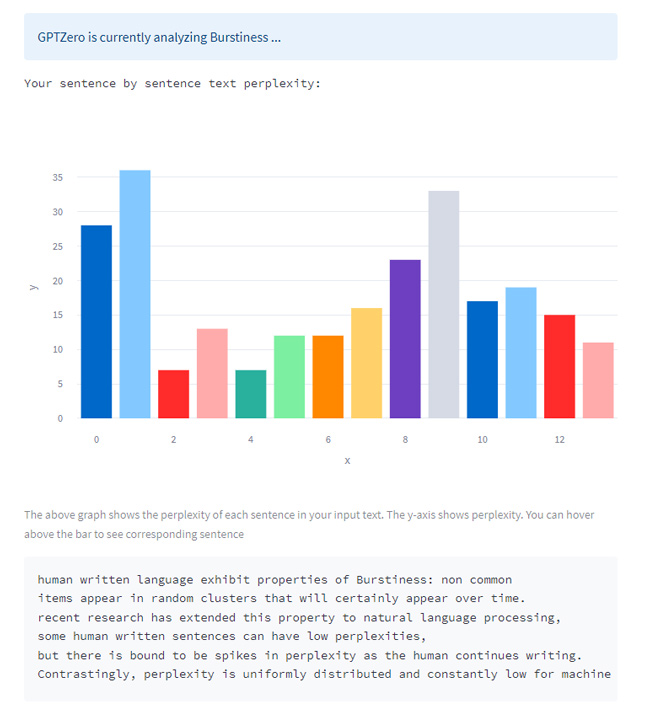

Edward’s approach with GPTZero is interesting, since it uses “perplexity” and “burstiness” in writing to detect whether a human or AI wrote the content. “Perplexity” aims to measure the complexity of the content being tested, or what Edward describes as the “randomness of text.” And “burstiness” aims to measure the uniformity of the sentences being tested. For example, Edward explains that “human written language exhibits non-common items appearing in random clusters.” Humans tend to write with more burstiness, while AI tends to be more consistent and uniform.
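GPTZero hasn’t published its exact formulas, so the following Python sketch only illustrates the general idea under my own assumptions. Perplexity is computed the standard way, from per-token log-probabilities that a language model would supply (the numbers below are made up). “Burstiness” is approximated here as how much perplexity varies from sentence to sentence. The function names are hypothetical.

```python
import math
from statistics import pstdev
from typing import Sequence


def perplexity(token_log_probs: Sequence[float]) -> float:
    """exp of the average negative log-probability per token (natural log).

    Lower values mean the text was more predictable to the model.
    """
    if not token_log_probs:
        raise ValueError("need at least one token")
    return math.exp(-sum(token_log_probs) / len(token_log_probs))


def burstiness(sentences: Sequence[Sequence[float]]) -> float:
    """Illustrative proxy: population standard deviation of per-sentence perplexity.

    Uniform sentences give a value near 0; a mix of predictable and surprising
    sentences gives a larger value.
    """
    return pstdev([perplexity(s) for s in sentences])


# Hypothetical token log-probabilities, one inner list per sentence.
uniform = [[math.log(0.5)] * 4, [math.log(0.5)] * 6, [math.log(0.5)] * 5]
varied = [[math.log(0.5)] * 4, [math.log(0.1)] * 6]
print(round(burstiness(uniform), 6))  # 0.0
print(round(burstiness(varied), 6))   # 4.0
```

In this toy framing, the “uniform” text (every sentence equally predictable) scores near zero, which matches the description of AI writing as more consistent, while the “varied” text scores higher, the way human writing tends to.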

I’ve been testing the tool over the past few days, and it has worked well (and has been pretty accurate). The site has had some growing pains since launching (I’m sure Edward didn’t expect the tool to become so popular so quickly), but site performance has improved greatly recently. Also, the homepage now explains he is creating a “tailored solution for educators”. I’m eager to hear more about that, but for now, you can add GPTZero as yet another tool in your AI detection arsenal. I think you’ll like it.

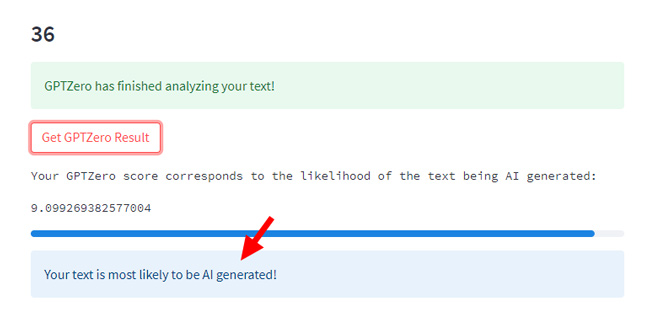

For example, here is the tool measuring “perplexity” and “burstiness” of content (based on an essay written by ChatGPT):

And here is the final result accurately detecting AI content written by ChatGPT:

7. OpenAI’s AI Text Classifier

Update July 2023: OpenAI decided to remove its AI content detection tool on July 24, 2023, due to its low rate of accuracy. You can read more in the post from TechCrunch. The tool now 404s, so I removed links to it from this post.

—

Well, this was an interesting development! OpenAI, the creator of ChatGPT, just released its own AI content detection tool. And as you would guess, it can detect when a piece of content was written by ChatGPT (like several other tools in my post). Based on my testing, it works well (when taking direct output from ChatGPT and testing it). Like other AI content detection tools, it’s not foolproof, but does seem to catch a number of examples of AI content that I tested.

Note, it requires a minimum of 1,000 characters of input and provides one of five responses:

- Very unlikely to be AI-generated.

- Unlikely to be AI-generated.

- Unclear if it is AI-generated.

- Possibly AI-generated.

- Likely AI-generated.
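To show how a five-level response like this can be derived from a single score, here is a minimal Python sketch. It assumes a hypothetical classifier that outputs a probability that the text is AI-generated. The cut-off values are illustrative assumptions, not confirmed numbers from OpenAI, and the function name is hypothetical. Only the 1,000-character minimum and the five labels come from the tool itself.

```python
MIN_CHARS = 1000  # minimum input length the classifier required

# Assumed cut-offs on a hypothetical "probability the text is AI-generated" score.
# These are illustrative, not confirmed values from OpenAI.
THRESHOLDS = [
    (0.10, "Very unlikely to be AI-generated."),
    (0.45, "Unlikely to be AI-generated."),
    (0.90, "Unclear if it is AI-generated."),
    (0.98, "Possibly AI-generated."),
]


def label_for(text: str, p_ai: float) -> str:
    """Map a score in [0, 1] to one of the five response labels."""
    if len(text) < MIN_CHARS:
        raise ValueError(f"input must be at least {MIN_CHARS} characters")
    if not 0.0 <= p_ai <= 1.0:
        raise ValueError("p_ai must be between 0 and 1")
    for upper, label in THRESHOLDS:
        if p_ai < upper:
            return label
    return "Likely AI-generated."


sample = "word " * 250  # 1,250 characters of placeholder text
print(label_for(sample, 0.30))  # Unlikely to be AI-generated.
print(label_for(sample, 0.99))  # Likely AI-generated.
```

Note the wide “unclear” band in this sketch. Bucketing a score this way trades a yes/no verdict for an honest signal of uncertainty.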

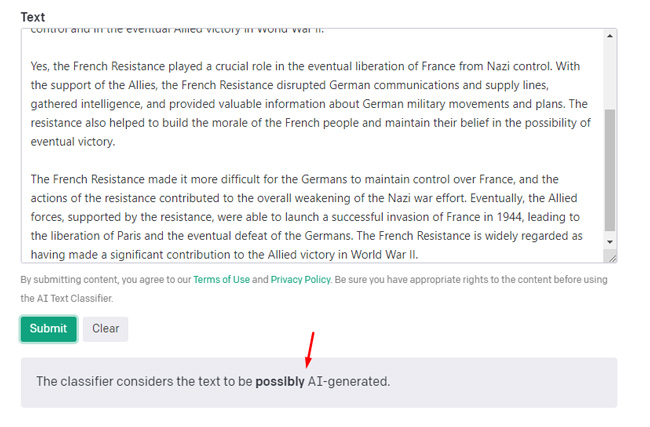

Here is a quick example based on an essay I created via ChatGPT. As you can see, it’s accurately being detected as AI content:

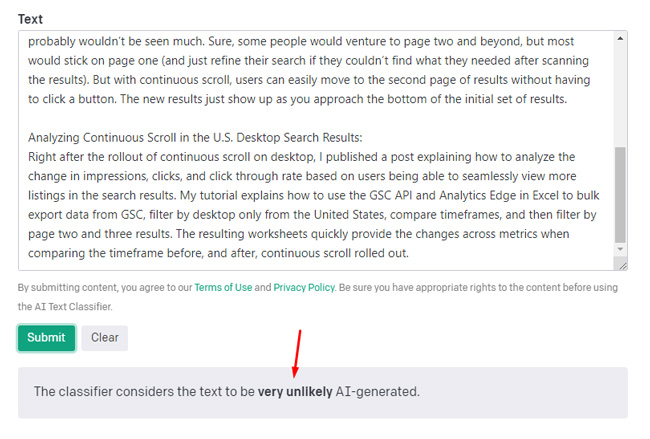

And here is an example of OpenAI’s tool accurately detecting a blog post of mine as human:

Summary: Although not foolproof, tools can be helpful for detecting AI content.

Again, I’ve received a ton of questions about which tools I’ve been using to detect lower-quality AI content, so I decided to write this quick post versus answering that question over and over. I hope you find these tools helpful in your own projects. And again, if you know of other tools that I should try out, feel free to ping me on Twitter!

GG