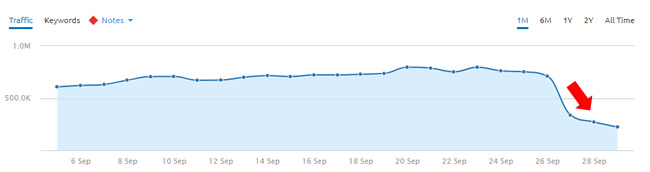

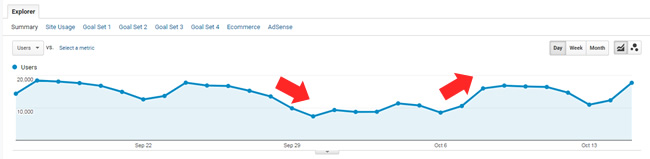

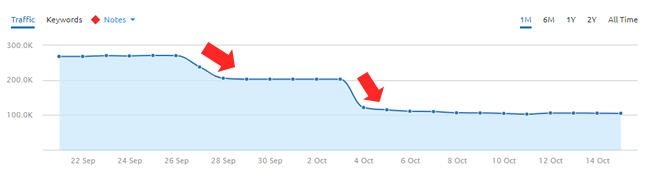

I was asked on September 26 whether or not I had seen another significant update after the August 1, 2018 update. That was a massive update and many sites were impacted across the web. Well, I said we were due, but didn’t realize how close we actually were. Just one day later on September 27, we saw another significant update that impacted many sites across the web.

And that update was followed by much more volatility on October 4, which also yielded some wild swings, reversals, and more. It’s been a crazy time algo-wise, which led Barry Schwartz, and then me, to ask Danny Sullivan if he could provide any more information about what we were seeing. He didn’t respond directly to us, but it was great to finally hear from him with a thread of tweets about the update. In that thread, Danny explained that the update started the week of September 24 and could take a week or more to fully roll out. After digging into sites that were impacted, I saw the first signs of impact late on September 26, with much more volatility on the 27th.

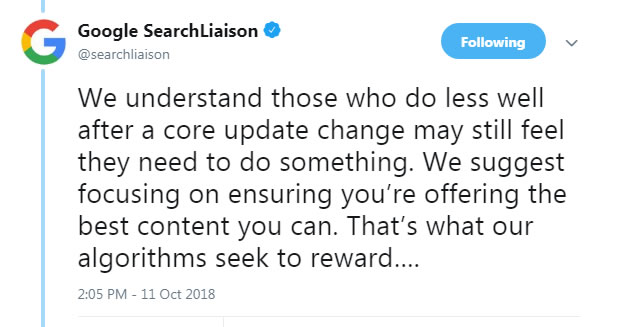

Here’s a section of Danny’s tweet thread about the 9/27 update:

This year, we shared about two broad core algorithm updates we had: in April and August. We also had a further update we can confirm, one that began the week of Sept. 24. With any broad core update, the full rollout time might be over the course of a week or longer….

— Google SearchLiaison (@searchliaison) October 11, 2018

Between the August 1, September 27, and then October 4 volatility, I have a spreadsheet containing a few hundred sites that were impacted, with many seeing significant volatility. For example, some sites are down 60%+ since 9/27 while others surged at the same pace in the other direction. Imagine losing 60% of your traffic overnight (or more)…

It’s one of the reasons John Mueller explained in a hangout that Google might be looking to limit the volatility on sites that are seen by Google’s algorithms as less relevant, which can result in a drop in rankings.

And watch that whole segment here if you are interested in hearing more (at 32:31 in the video): https://www.youtube.com/watch?v=NYAup40MeTM&t=32m31s

In Danny’s tweets, he once again explained that site owners should look to the quality rater guidelines (QRG) to learn what Google’s algorithms are looking for. He explained that Google is looking to rank the best content and the QRG provides many examples of what Google’s algorithms are looking for from a quality standpoint.

I’ve recommended reading the QRG many times in my posts about major algorithm updates, dating back to 2014! But let’s face it, the QRG focuses on many different topics. So, those looking for a smoking gun with the latest updates aren’t going to find just one in there. You might find twenty, and across topics. I’ll cover more about what I’m seeing with this update below, but my core recommendation hasn’t changed from the August 1 update (or previous major updates)… Focus on ALL of your problems. Surface all quality problems on your site, and off, and fix them all.

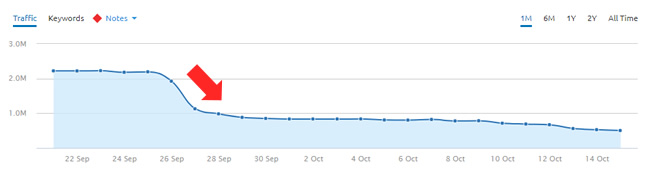

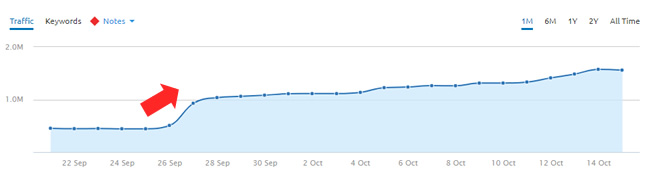

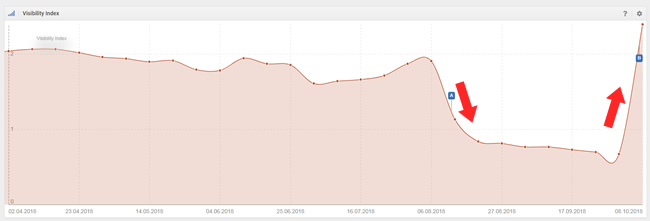

Just to show some of the volatility I’ve seen across sites, here are some examples of surges and drops in search visibility during the September 27 update. This often correlates well to increases and decreases in organic search traffic:

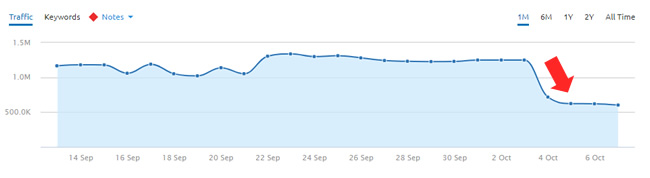

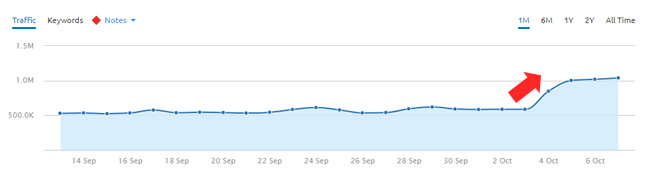

And here are some swings based on the October 4 volatility (which extended to 10/8 and beyond):

Reversal Craziness:

One really interesting thing we saw with the September 27 update was the number of reversals from the 8/1 update. In addition, there were also micro-reversals on 10/4 from the 9/27 update! Some of those reversals from the 8/1 update absolutely could have been from changes being implemented by site owners, while other sites seemed to reverse for no apparent reason. And regarding the 10/4 reversals from the 9/27 update, that’s clearly a quick tweak to the algo (or what I’ve been calling tremors for a long time). Both sets of reversals lead you to believe that Google is testing something new in the algo. For example, it could be a new trust element, a tweak to how inbound links are handled, etc.

Remember, for evaluating E-A-T, Google primarily looks at mentions and links from well-known sites. If they simply tweaked the power of those signals, then that could have a major impact on rankings. I’m not saying that’s the case, but it could be.

Update 10/23/18: I just published a short article on LinkedIn explaining more about the link situation. After analyzing many sites within specific niche categories, and comparing their link profiles, I think it’s very possible that Google dialed-up, or down, the power from “trusted” and/or “untrusted” links. You should read my article to learn more about that. It would be the most scalable way to tackle “trust” (and globally).

Anyway, here are some reversals (including some sites that reversed on 10/4 after surging or dropping on 9/27):

And here is a micro-reversal (a site reversing course on 10/4 after a drop on 9/27):

In this post, I’ll cover what I’m seeing based on analyzing a number of sites impacted by the update. That includes case studies, relevance, quality, trust, reversals and micro-reversals during 9/27 and then 10/4, head-scratching findings that make you wonder what the heck Google is actually doing with these updates, and more. Then I’ll end with some advice for site owners that have been impacted.

Let’s start with some interesting case studies:

Case 1: Reversal from the August 1, 2018 update:

The first case I’m going to cover is a great story. It’s a family-run business that got smoked by the 8/1 update. They reached out to me for help in August, but I unfortunately didn’t have time to take them on as a client. It turns out they read my post about the August 1 update and took it to heart. They decided to take a hard look at their site, surface all quality problems (including technical SEO issues impacting quality), and fix them.

I received an email shortly after the 9/27 update explaining their situation. The site owner explained that they objectively reviewed their site, found a number of problems that had been listed in my August post (and previous posts about major algorithm updates), and then implemented a bunch of those changes.

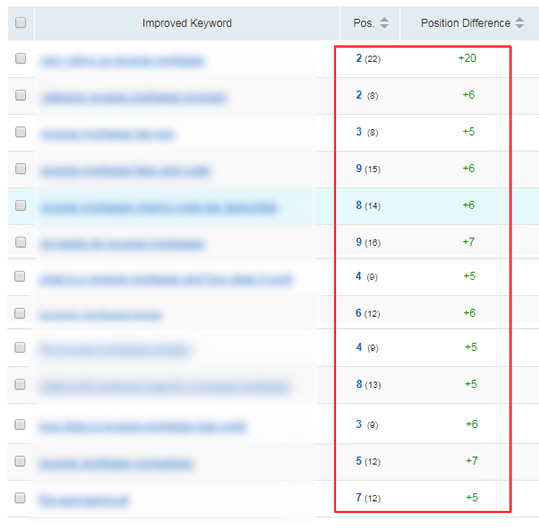

Then the September 27 update rolled out and they completely reversed. Actually, the site owner told me that they even gained more visibility than where they were prior to the August 1 hit (gaining additional keywords that they weren’t ranking for prior to 8/1). It’s a great example of what I’ve been saying all along. Surface your problems and fix them all as quickly as you can. That includes content quality, user engagement, technical SEO, aggressive advertising, and more.

Here’s a snapshot of some rankings changes after the 9/27 update:

Case 2: A Fascinating, Yet Disturbing, Site Change And How Site Trust Plays A Role

In early summer, a client of mine decided to pull the trigger on a significant site change. It was a risky move in my opinion, but it was for the right reasons from a user perspective. I can’t explain too much about what they did (at least yet), but let’s just say that for the category of site, and the history of the domain, it was a bold move.

Well, three days after they pulled the trigger, they got absolutely crushed. I mean obliterated. They lost over 70% of their Google organic traffic overnight.

Here’s the catch. Overall, it was a relatively clean site change. Sure, there are always issues with big changes on a large-scale site, but nothing stood out as terrible. So, the drop was disturbing (yet fascinating) to analyze.

Again, I can’t go into too much detail here, but let’s just say that it really looked like Google’s algorithms didn’t trust the site change. In other words, the change could have looked like something funny was going on… There were no changes content-wise, no UX changes, no advertising changes, etc.

As time went on and traffic remained anemic, I had several stressful, yet important, calls with my client. There were some serious decisions to make about how to proceed. Since the move was the right one for users, we decided to keep the changes in place hoping that Google would eventually trust the changes and traffic would surge back.

Almost four months went by and the site looked like it might be coming back at several points (including during the 8/1 algorithm update), only to fall back down again a few days later. It was like Google was testing the site with more traffic, but just for a short period of time. There was clearly some type of trust dampening going on algorithmically. Again, this was both disturbing and fascinating at the same time.

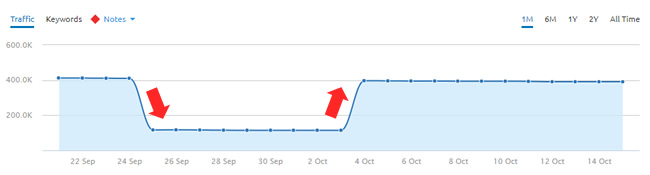

And then the September 27 update rolled out, and BOOM, traffic absolutely spiked. It was amazing on multiple levels. First, our decision to stay the course paid off. Second, it was absolutely fascinating to see how trust played a part in the algorithm update (and for this site based on the changes it implemented in early summer).

There was clearly some type of trust signal that was finally tripped (maybe based on time, maybe the improvements they had made prior to the site change helped, maybe a combination of both, or more). But the update rolled out and my client surged back in a big way.

I wish I could explain more about this case, since I know there will be questions, but I can’t provide more details for now. Just know that trust (algorithmically evaluated) had something big to do with the surge during the 9/27 update.

Case 3: Kicked While Down, Then Helped Up – A Micro-Reversal For A YMYL Health Site

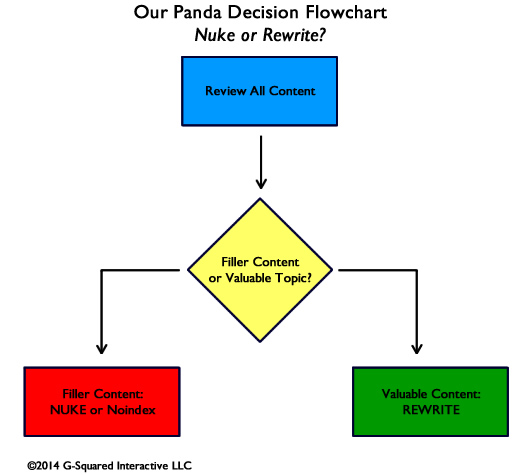

The next case is a good example of a site getting hit again after a major update. The site, which is a YMYL health site, got hammered during the 8/1 update and then saw even more downward movement during the 9/27 update. The site owners have been moving to make big changes as quickly as they can since the 8/1 update, including implementing some significant changes from a content standpoint. They are aggressively going through the boost, nuke, or noindex process that I’ve covered before on my blog.

Here is a quick flowchart from medieval Panda times:

The site lost 30% of its traffic during the 8/1 update and then another 20% of its traffic during the 9/27 update. And add the content cuts that are happening across the site (where some of those pages were still ranking even though they weren’t necessarily high-quality), and you have the perfect storm from a traffic-drop perspective.
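To put those two drops together, here is a quick back-of-the-envelope sketch. It assumes each percentage is measured against the traffic level right before that update, which the numbers above don't specify, so treat it as an illustration rather than the site's actual reporting. The function name `cumulative_drop` is just something I made up for this example.

```python
def cumulative_drop(drops):
    """Combine successive fractional drops into one overall drop.

    Assumption: each drop is relative to the traffic level that
    remained after the previous drop (compounding), not to the
    original baseline.
    """
    remaining = 1.0
    for drop in drops:
        remaining *= 1.0 - drop  # keep whatever share survived this update
    return 1.0 - remaining


if __name__ == "__main__":
    # 30% lost on 8/1, then another 20% lost on 9/27
    overall = cumulative_drop([0.30, 0.20])
    print(f"Overall drop if compounding: {overall:.0%}")
```

Under that compounding assumption the combined loss works out to roughly 44% of the pre-8/1 traffic. If both percentages were instead measured against the original baseline, the combined loss would simply be 50%. Either way, that's before counting the pages being cut from the site.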

But then 10/4 arrived, and the drop from the 9/27 update completely reversed. They were back to where they were prior to 9/27. It was crazy to see. It seemed that Google tweaked the algorithm and some sites that were impacted on 9/27 completely reversed. Not all sites hit by the 9/27 update reversed, but clearly something was refined algorithmically and some did reverse course. Just an interesting side note.

The site clearly didn’t improve in quality over a few-day period… This was a clear tweak implemented by Google from an algo update standpoint that resulted in some micro-reversals.

It’s a great example that reinforces you are not in control. Google is, and it can tweak algos as many times as it wants. And your site can be on that roller coaster, whether you buy a ticket for the ride, or not.

Trust and Reputation – Don’t ignore them

Ever since the 8/1 update, there’s been a lot of talk about E-A-T, trust, etc. After analyzing many sites that were impacted by both the August and September updates, it does seem that Google dialed up some signals related to trust, and then tweaked those signals. It’s fascinating, but we’re still not really sure how they are handling that algorithmically.

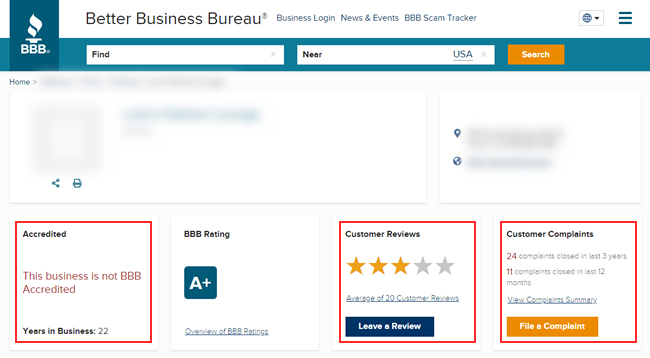

Gary Illyes did explain that for E-A-T, Google was primarily looking at mentions and links on well-known sites, so any tweak in how they handle that could impact rankings in a big way. Marie Haynes wrote a post about the latest update and explained that she thought reputation and trust issues played a big part. For example, she described a site with poor Better Business Bureau (BBB) ratings that tanked during the 8/1 update. She also mentioned reviews and ratings on other sites that Google might trust. You should read her post. There are some interesting points Marie makes.

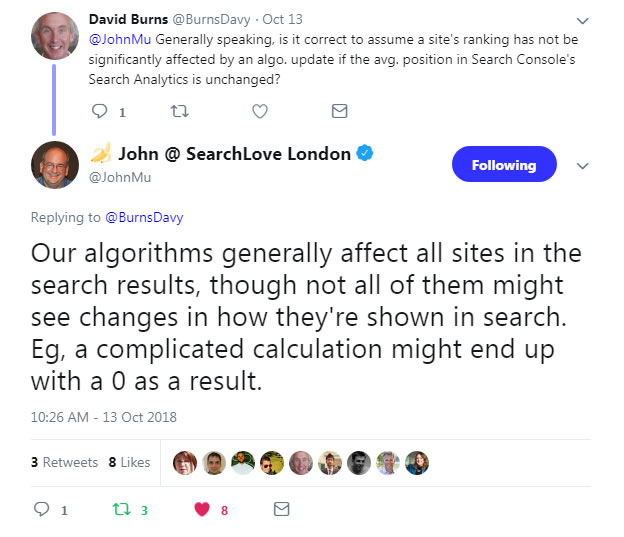

I’ve seen some sites definitely line up with that finding, but others that don’t. And that makes sense, based on the sophisticated calculation Google is using with these updates. One signal will not kill your site. But a combination of signals absolutely could. Here’s a great tweet from John Mueller explaining that complicated calculations could yield no changes in rankings:

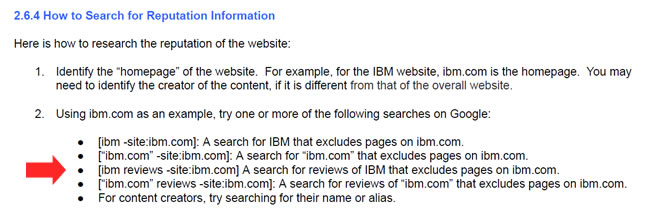

And remember, checking the BBB is not all the QRG says to do with regard to researching reputation. The guidelines also tell raters to research a site’s reputation by searching for reviews of the site across the web (using advanced search operators). For example, searching for domain.com -site:domain.com. You can also add “reviews” to the query.
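If you want to run that reputation check across a list of domains, a small helper can build the queries for you. This is just a minimal sketch: the function names are my own, and the search URL format (a `q` parameter on google.com/search) is an assumption about how you'd open the query in a browser, not anything taken from the QRG.

```python
from urllib.parse import quote_plus


def reputation_query(domain, extra_terms=()):
    """Build a query for mentions of a domain that excludes the domain's own pages."""
    parts = [domain, f"-site:{domain}", *extra_terms]
    return " ".join(parts)


def search_url(query):
    # Assumption: the usual Google search URL with the query in the "q" parameter.
    return "https://www.google.com/search?q=" + quote_plus(query)


if __name__ == "__main__":
    for query in (
        reputation_query("domain.com"),
        reputation_query("domain.com", ["reviews"]),
    ):
        print(query)
        print(search_url(query))
```

Swap in your own domain (and your competitors' domains) and review what comes back by hand. The point is to see what a rater would see, not to automate the judgment.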

Update November 14, 2018: Google’s John Mueller Confirms BBB Ratings Aren’t Used Algorithmically

I’ve been pretty vocal recently that I don’t believe BBB ratings were being used algorithmically by Google. It just didn’t make sense to me. First, the BBB is only in three countries (and really one primary country – the United States). Since Google’s core ranking algorithm is global, it doesn’t make sense that Google would use a limited source like that. Second, there’s a paid element to the BBB. You don’t have to pay, but you can pay to become accredited. That ranges from $480 to $1,155 per year depending on the size of your company. That also throws a wrench into Google using BBB ratings. And third, it’s just not scalable. Remember, we’re talking about global algorithms and NOT just algorithms focused on the United States (or a limited set of countries).

So, I asked Google’s John Mueller in a webmaster hangout if Google was using BBB ratings algorithmically. He was pretty clear with his response. No, they aren’t using BBB ratings algorithmically. He said there are various issues with sources of information for companies and Google can’t blindly rely on some third-party ratings. You can watch the video below at 15:32 to hear his full response.

Now, that doesn’t mean they aren’t looking at site reputation overall. There’s a good chance they are… But they wouldn’t rely on one source or use a score from one site algorithmically. Actually, I posted an update earlier in the document from 10/23/18 where I explained how Google might be dialing-up, or down, the power of “trusted” or “untrusted” links. That’s much more likely and way more scalable. You should read that section (and the LinkedIn article I published) to learn more about that.

Emulating Quality Raters, Always A Smart Move:

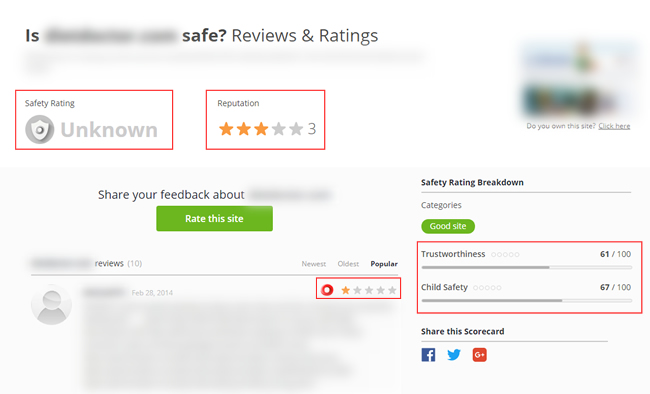

When checking several sites that were negatively impacted by the update, I did find a number of negative reviews across sites like Web of Trust, Better Business Bureau, and other sites that enable users and customers to review businesses. I have no idea if Google is taking any of that into account algorithmically, but it’s worth noting that I surfaced that quite a bit. And again, Google asks raters to do this when evaluating algorithm changes. That said, not all sites lined up with this finding. Some had strong reviews, but dropped, while others had negative reviews and surged. Here are two sites with negative reviews that did drop (BBB and Web of Trust):

Poor reputation on Web of Trust:

Also, Google’s John Mueller has explained that Google IS NOT researching every author’s reputation on the web. Raters might be doing this when evaluating algorithm changes, but that’s just feedback sent to the engineers. Then the engineers tweak their algorithms. So again, reputation research might not be directly impacting rankings, but it sure could be indirectly impacting them.

Whether Google is evaluating that information in aggregate, or if it’s identifying E-A-T in other ways, I think it’s really important to build a strong reputation in your niche.

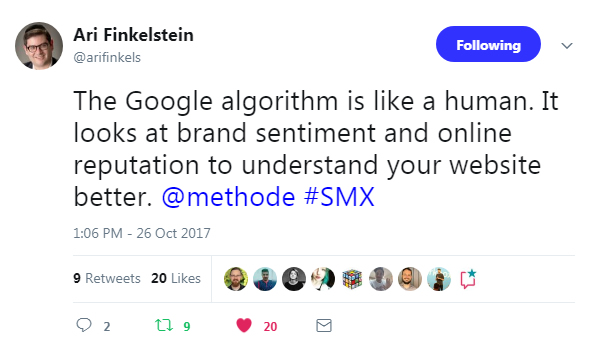

Does A Quasi-Human Algorithm Yield Better Search Results?

Google’s Gary Illyes explained at Pubcon last year that Google’s core ranking algorithm is becoming more human. He was speaking about sentiment, but I think that statement spoke volumes. An algo that’s more human-like makes complete sense and can help us understand what’s going on algorithmically. Creating a quasi-human is the best way to reproduce what a real person experiences in the SERPs and while visiting sites across the web. But that’s clearly not easy and can yield problems…which of course can yield wild volatility and reversals as Google refines the algo. Again, just another interesting side note as we’ve seen crazy volatility and some reversals with the latest updates.

“Stay in your lane” – Health and Medical – YMYL (again)

With the 8/1 update we saw a lot of health and medical sites impacted (which is why Barry Schwartz named it the “Medic Update”). Well, there was more significant volatility in this niche again during the 9/27/18 update, with some sites dropping or surging more, while others reversed course.

When digging into the drops and surges, there were some clear cases of sites “not staying in their lane”. I’ve been using this expression a lot recently with companies I’m helping. For example, don’t write about topics you have no right to publish content about. Google is clearly trying to surface results from experts when it comes to YMYL topics and doesn’t want to surface content from someone without that necessary expertise.

Remember, YMYL sites and content could “impact the future happiness, health, financial stability, or safety of users”, which is a quote directly from the Quality Rater Guidelines (QRG) about YMYL content.

If you focus on non-YMYL topic X and you suddenly start writing about serious health subjects like cancer, and other sensitive medical issues, then you are crossing lanes and could run into problems for sure (like a head-on collision with Google’s core ranking algorithm). And even if you focus on health and medical, try to stay in your lane. Don’t cross over too much into other sensitive areas unless you build that expertise.

Here is search visibility trending for a site that didn’t stay in its lane. Beware:

Relevance (which INCLUDES quality) – Tackle Everything

I’m beating a dead horse here… This is something I’ve covered many times in my posts about Google’s core ranking updates, but it’s worth repeating.

Google’s John Mueller has been explaining that broad core ranking updates are about relevance. For example, Google’s algorithms might view certain sites and content as more, or less, relevant for a certain query over time. Its algorithms are essentially trying to determine which sites and content should rank now for specific queries.

Well, the web changes, people change, intent can change, etc. And as Google’s algorithms see those changes, they must adapt too. That’s when certain sites can drop and others can surge. I saw many examples of relevance adjustments again with the 9/27 update.

Also, and this is extremely important, Google’s John Mueller has explained that relevance INCLUDES quality (see the video below). I’ll cover more about quality next, but don’t sit back and think that a certain update has completely killed your efforts to regain those rankings. As I’ve covered many times before, John has explained that you should significantly improve quality over the long term if you want to see positive movement during subsequent updates. That’s what Google wants to see and it’s what I’ve seen in the field while helping companies with major algorithm updates.

And regarding seeing positive movement, you won’t surge back in a week or two, and you probably won’t in a month or two either. It typically takes months of hard work to significantly improve the quality of your site, including improving content and UX, fixing technical SEO problems, removing aggressive or deceptive advertising, and more.

As a quick example, a site that recently dropped during the 9/27 update noticed that its blog posts that used to rank for e-commerce head terms no longer ranked as highly. And the pages and sites that took its spot were e-commerce category pages from larger and more powerful sites. That’s a good example of a relevance adjustment. Google determined that the blog posts weren’t what users were looking for anymore. So, they moved other listings up that they believed would be better for users.

Of course, that raises the question of how the site that dropped can get its own e-commerce category pages to rank. Based on what I was seeing, the quality of the site overall was not strong enough compared to the competition. The blog post was great for that specific topic, but they will have to significantly strengthen their site overall to compete with powerful players in their niche to get category pages ranking for those queries. That’s a good segue to quality.

Quality – Content, UX, Aggressive Advertising, Technical SEO, and more

I’ve covered this at length many, many times before. You can read my posts about Google algorithm updates to learn more about all of the potential quality issues that can be impacting your site. Well, I saw many of the usual suspects again while analyzing the 9/27 update.

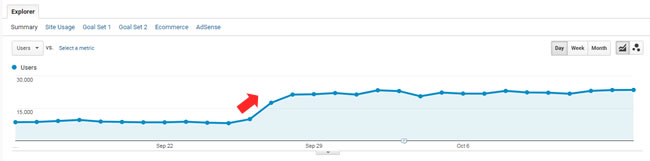

I won’t go crazy explaining everything I surfaced since I’ve covered this heavily before. That said, I’ll provide a great example from the 9/27 update. One site that got crushed (non-YMYL) had many quality problems. For example, aggressive and deceptive ads, low-quality and thin content, technical SEO issues causing quality issues, and more. Here is what happened during the 9/27 update:

Again, I saw many examples of quality problems across sites impacted by the 9/27 update and 10/4 tremor. Don’t overlook these issues… they can cause big problems down the line.

My Advice – Importance Of Performing An SEO SWOT Analysis

With Google’s wild updates recently, I believe it’s never been more important to fully understand your risks, surface all the problems riddling your site, and take significant action. You can think of this as a SWOT analysis SEO-style. Site owners shouldn’t just look for a single smoking gun, since there’s almost never just one. They should look for a battery of smoking guns, prioritize them, and start knocking them off one by one (and as quickly as possible).

But that’s only one piece of the puzzle. The other piece is objectively analyzing your current rankings and traffic and determining where certain risks lie. For example, should your blog post rank for a competitive head term? How can you improve other content on your site (or create new content) that would be a better fit?

Are there major gaps in rankings compared to your competition? Do you even have content that addresses those topics? How strong is that content? Is it 10X better than what’s ranking now?

Again, find all quality problems and fix them, but also understand what you’re ranking for now and objectively determine if you should rank for those queries. Is your content relevant?

Also, you should always be focusing on user (and customer) happiness. This impacts site reputation across a number of trusted places on the web (some of which Google may be looking at when it evaluates trust). Remember, Google asks quality raters to research the reputation of sites across the web. You should too, and you should obviously be looking to help customers that weren’t happy with your services or products. Address complaints directly, resolve issues, and good things can happen. Good karma wins.

A quick example: The most powerful comment that drove change.

Don’t forget about the power of user studies. As I’ve said many times before, you never know what you’ll hear after having users objectively go through your site while trying to accomplish a task. And I mean objective users, not your family, friends, and coworkers.

And if you can’t run a user study for some reason, then some user feedback might be right under your nose. Comments and emails can be a proxy for user studies. You can learn a lot from users that decide to reach out to you (either on the site or via email). And some of that feedback is sitting out there on the web right now. Check your own comments, check third-party review sites, and more. That’s what quality raters are doing by the way.

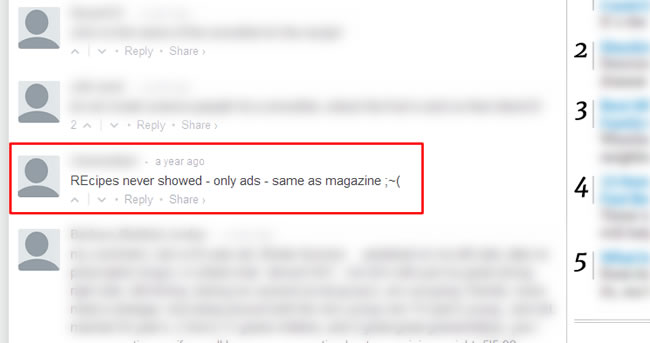

As a quick example, here is what I found on a site I was auditing after they got pummeled by a major algorithm update. I was literally banging my head against my monitor based on the frustrating user experience, horrible and aggressive advertising situation, excessive monetization tactics, and more, when I came across this comment. It was so fitting:

This was the perfect complement to my deliverable about aggressive and deceptive ads. It spoke volumes… Therefore, mine your own data. You never know what you’ll find.

Summary – Think holistically about your site changes.

The September 27, 2018 algorithm update was another big one that followed a massive update in early August. Google is clearly testing some new signals, refining its algo, etc., which is causing massive volatility in the SERPs. I hope my post shed some light on what’s been going on with the 9/27 update and 10/4 tremor and their connection with the August 1 update. For site owners that have been impacted, I highly recommend objectively digging into your site, running user testing, addressing user (and customer) happiness, and creating a strong plan of attack for improving your site overall.

This fall has been a wild ride so far and I’m not sure we’re done yet. Remember, I called last fall “The Hornets’ Nest”. I wonder if this fall will match it.

GG