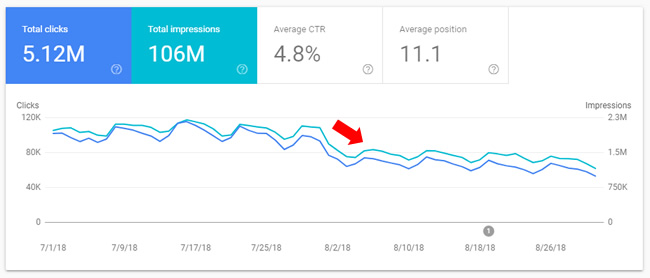

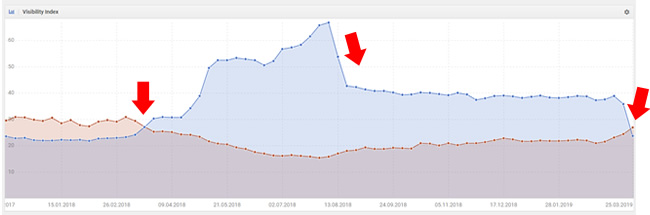

In 2018, we saw three broad core ranking updates that caused massive volatility in the search results globally. Those updates rolled out in March of 2018, August of 2018 (Medic Update), and then late September of 2018. All three were huge updates, which sent some sites dropping off a cliff and others surging through the roof.

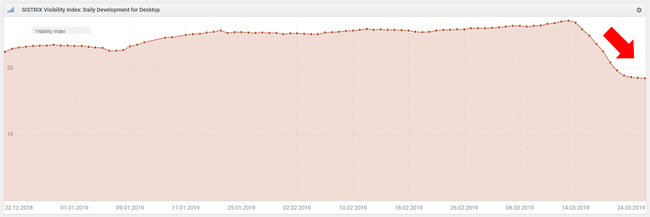

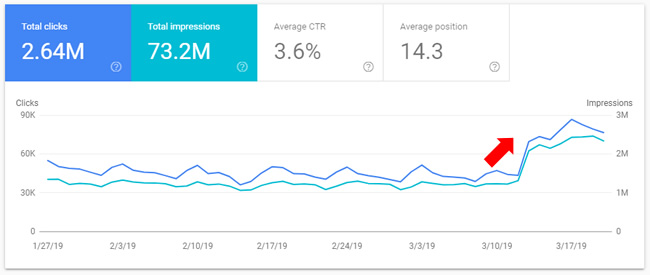

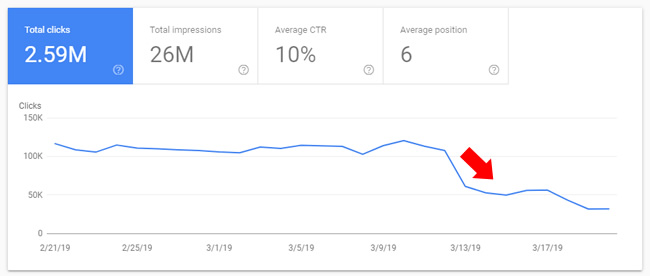

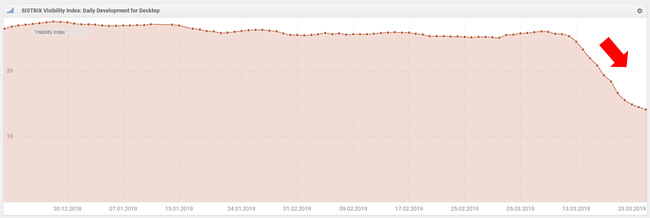

Since late September/early October of 2018, we have been waiting for the next big core update. And it finally arrived on March 12, 2019. And the update didn’t disappoint. Once again, there was a ton of movement across sites, categories, and countries. It didn’t take long to see the impact. For example, here is a massive surge in search visibility and a large drop all starting on March 12.

Although there was a lot of movement (and chatter) about the health/medical niche again, the update clearly impacted many other categories (just like the Medic update did). For example, I have many sites documented that saw significant movement in e-commerce, news publishers, lyrics, coupons, games, how-to, and more.

Note, I cover a number of topics in this post. To help you navigate to each section, I’ve provided a table of contents below:

- Google’s comments about the March 12, 2019 update.

- A full Medic reversal? Not so fast…

- A softening of the Medic Update.

- Tinkering with trust via the link graph.

- Examples of sites that surged.

- Examples of sites that dropped.

- A note about site reputation, reviews, and ratings.

- Taking a “kitchen sink” approach to remediation.

Google’s comments about the March 12 Core Update: Reversals, Neural Matching, and Penguin

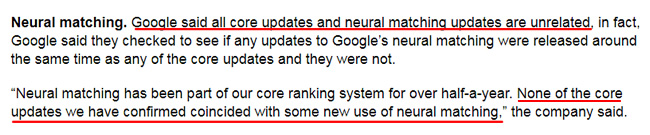

Before I get into my analysis, it’s important to know that Google provided some information about the March 12 update to Barry Schwartz at Search Engine Land. Google explained that the latest update on 3/12 wasn’t a full reversal. That makes complete sense based on what I’m seeing. More on that soon.

Google also explained that all of the recent core updates (including this one) had nothing to do with any neural matching updates. They checked each of those updates and NONE lined up with these core updates. That’s incredibly important to understand since there are some that believe the updates had a lot to do with neural matching, which is an artificial intelligence method designed to help Google connect words to concepts.

The example they provided last fall when they mentioned neural matching demonstrated that you could search for what the soap opera effect was by describing it in your query, and Google could know what you were referring to. If you want to learn more about neural matching, Google just provided information about the differences between neural matching and RankBrain.

Google also explained that these updates had nothing to do with Penguin. Now, that doesn’t mean Google isn’t evaluating links as part of this update… it just means that Google’s Penguin algorithm didn’t have anything to do with the March 12 update. More about links soon.

A Full Medic Reversal? Not so fast…

Based on the impact, it was easy to see that many sites that were impacted by the August update (Medic) saw a change in direction. And some saw a radical change in direction, which led some to believe that the March 12 update was a complete reversal of Medic. That’s definitely not true based on the data I’ve been analyzing (and what many others have seen as well).

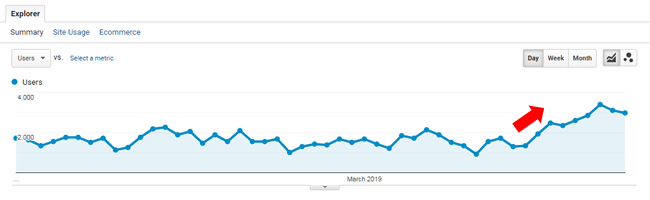

For example, there are definitely sites that dropped heavily on August 1 and then surged on March 12, but there are also sites that dropped heavily on August 1 and dropped even more on March 12. And then there are some sites that increased on August 1 and surged even more on March 12. Here is an example of a site that reversed course after getting hit by the Medic update:

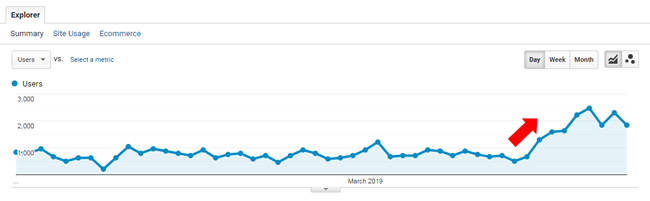

But, here are two sites seeing more movement in the same direction (they were either hit by Medic and dropping more on March 12, or surged during Medic and then gained more).

Also, it’s worth noting that there are many sites that saw some improvement on March 12 that had dropped heavily on August 1 (so they experienced a partial recovery and not a full one). And on the flip side, there are some sites that dropped on March 12 after surging in August, but didn’t drop all the way back down to their previous levels. For example, draxe.com surged back on March 12, but not all the way back. There are many sites with trending like that:

Side note: Since I’ve been analyzing the health/medical niche heavily since the August update, it was ultra-interesting to see “the big three” get knocked down a few notches. I’m referring to healthline.com, webmd.com, and verywellhealth.com. In particular, healthline.com and verywellhealth.com experienced some big drops in search visibility. That makes sense, since many other sites in health/medical surged during this update. Based on those surges, it’s only natural that the top three players who were dominating health queries would experience a drop as others see gains. But there may be more to those drops than just that. I’ll cover more about what I’m seeing soon after analyzing many sites that saw movement during this update.

For example, verywellhealth.com lost significant search visibility.
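To make the patterns in this section concrete (reversal, partial recovery, and continued movement in the same direction), here is a minimal Python sketch that labels a site based on its visibility change around each update. The function name, the 5% "flat" threshold, and the sample numbers are all hypothetical, and the sketch assumes visibility stayed roughly level between the two updates. It is an illustration, not a description of how any visibility tool classifies sites.

```python
def classify_trajectory(august_change: float, march_change: float, flat: float = 0.05) -> str:
    """Label a site by how its visibility moved across two updates.

    Both arguments are fractional changes relative to the level just before
    each update (e.g. -0.40 means a 40% drop). Assumption: visibility was
    roughly flat between the updates, so the two changes can be compounded.
    """
    # Treat very small moves (hypothetical 5% threshold) as noise.
    if abs(august_change) < flat or abs(march_change) < flat:
        return "no clear pattern"
    # Same sign on both updates: the site kept moving in one direction.
    if (august_change > 0) == (march_change > 0):
        return "continued in same direction"
    # Opposite signs: compare the level after March with the pre-August level (1.0).
    after_both = (1 + august_change) * (1 + march_change)
    if august_change < 0:
        return "full recovery" if after_both >= 1 else "partial recovery"
    return "full give-back" if after_both <= 1 else "partial give-back"


# Hypothetical sites, not real data.
print(classify_trajectory(-0.40, 0.30))   # partial recovery
print(classify_trajectory(-0.30, -0.20))  # continued in same direction
print(classify_trajectory(0.50, -0.20))   # partial give-back
```

Note that a 40% drop followed by a 30% gain is only a partial recovery: the gain applies to the smaller post-drop base, so the site ends up at 78% of its original level.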

Algorithm Tinkering – Softening From The Medic Update & E-A-T Calculations

I mentioned the idea of a Medic reversal earlier in the post and how I don’t believe there was a full reversal. But that doesn’t mean Google couldn’t have softened some of the algorithms it used in the August update. Again, there were many sites seeing improvement during the March update that got hammered during the August update.

I was able to ask John Mueller this exact question during a recent webmaster hangout video. John gave a vague answer, but he did explain that Google sometimes goes too far with an update and needs to pull it back. He also said this can work the other way around, where Google didn’t go as far as they should, so they strengthen their algos.

Here’s the video of John explaining this (at 20:04 in the video):

A softening of the Medic Update is entirely possible. It was one of the biggest and baddest updates I have ever seen. There were extreme drops and surges in traffic everywhere I looked. And the health/medical niche saw the most volatility (hence the name Medic).

Every time I analyzed a site impacted by the August update, I couldn’t help but think the update had a unique and extreme feel. Some sites got slaughtered when it was hard to see why they would deserve dropping that much (70%+ for some sites). Anyway, the important part of this section is that Google could have tinkered with its algorithms to pull back some of the power of the Medic update. That’s entirely possible and could cause massive volatility when they do that.

That’s a good segue into the most scalable way for Google to tinker with trust, especially for Your Money or Your Life (YMYL) sites and content – I’m referring to links.

The Link Graph and Tinkering With Trust

After analyzing the Medic update, and then the September update, I explained that the best way for Google to tinker with trust would be via the link graph. For example, if Google simply increased the power of certain links, while decreasing the power of other links, then that could cause mass volatility across the web (just like we saw with the August update).

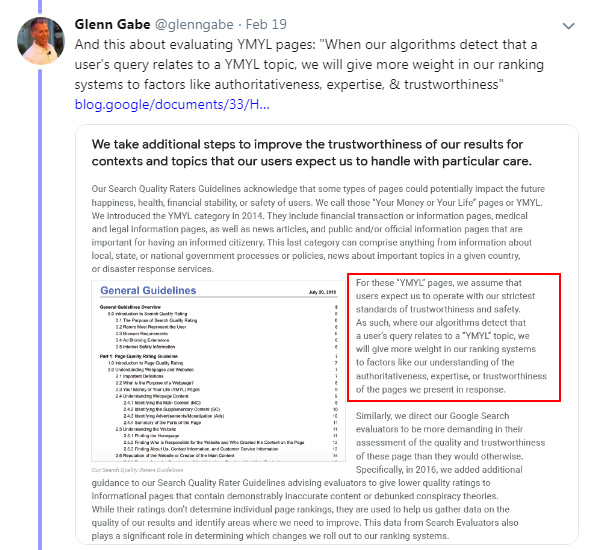

And that’s especially the case with YMYL sites, meaning sites that target queries that can “impact the future happiness, health, financial stability, or safety of users”. You can read Google’s Quality Rater Guidelines (QRG) for more information about YMYL sites.

After the Medic update, it was clear that YMYL sites were impacted heavily, and more heavily than other types of sites. After analyzing a number of sites and digging into their link profiles, I could see the gaps between certain sites that surged and dropped (from a link power perspective). For example, when comparing sites that surged with competing sites that dropped, I found links from the CDC, CNN, and other extremely powerful domains pointing to the sites that surged.

Let’s face it, you can’t just go out and gain links like that overnight. It led me to believe that Google could be tinkering with trust via the link graph. I included information about that in my post about the September 27, 2018 core update and in a separate LinkedIn post.

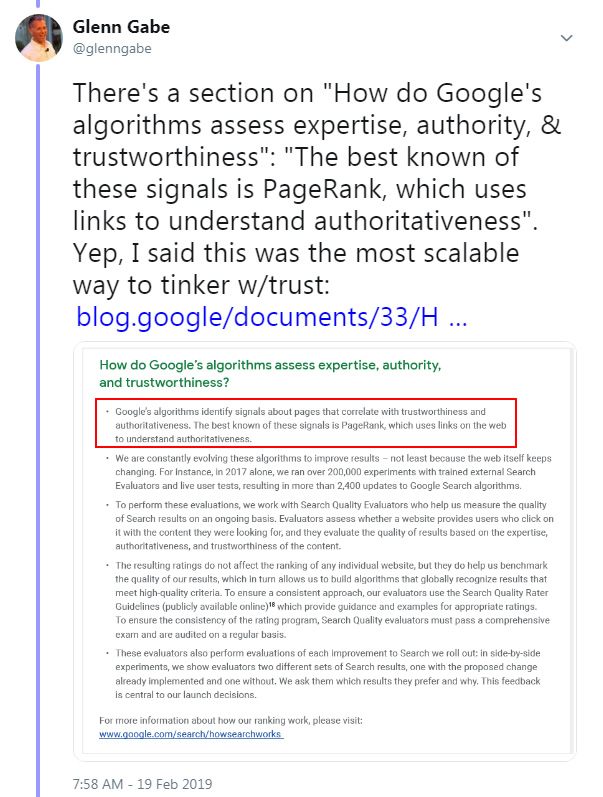

Beyond what I just explained, Google released a whitepaper recently that made this even clearer. That whitepaper revealed more information about how Google evaluates expertise, authoritativeness, and trustworthiness (E-A-T) algorithmically. And it supports my point about tinkering with trust via links.

Google explained that E-A-T is algorithmically evaluated by several factors, the best-known factor being PageRank (which uses links across the web to understand authoritativeness). Yes, that means Google can use links to evaluate E-A-T, which makes complete sense. Like I’ve said in the past, it’s the most scalable way to tinker with trust…

Going one step further, Google also explained that when it detects YMYL queries, it can give E-A-T more weight. So, that means the right links can mean even more when a query is detected as Your Money or Your Life (YMYL). This could very well be why many YMYL sites were heavily impacted by the August update (Medic). It’s just a theory, but again, makes a lot of sense.

Now with the March 12 update, we very well could be seeing an adjustment to the algorithms that are used to evaluate E-A-T. Any sort of power adjustment to specific links can cause mass volatility (especially for YMYL queries). Remember, many YMYL sites saw a lot of movement during the March 2019 update, so it’s entirely possible. And as I explained above, Google’s John Mueller said that Google can dial down certain algorithms if it believes it went too far.
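To illustrate the theory in this section, here is a minimal sketch of the textbook PageRank power iteration with a weight on each link. It is not Google's algorithm, and nobody outside Google knows how links are actually weighted. The graph, the node names (`hub`, `site_a`, `site_b`), and the weights are hypothetical. The only point is that changing the weight of one link shifts scores for both competing sites without either site gaining or losing a single link.

```python
def weighted_pagerank(links, damping=0.85, iterations=100):
    """Textbook PageRank with edge weights.

    links: dict mapping (source, target) -> positive weight.
    Returns a dict of node -> score; scores sum to 1.
    """
    nodes = sorted({node for edge in links for node in edge})
    n = len(nodes)
    # Total outgoing weight per node, used to normalise each node's links.
    out_weight = {node: 0.0 for node in nodes}
    for (src, _dst), weight in links.items():
        out_weight[src] += weight
    rank = {node: 1.0 / n for node in nodes}
    for _ in range(iterations):
        # Score held by nodes with no outlinks is spread evenly over all nodes.
        dangling = sum(rank[node] for node in nodes if out_weight[node] == 0)
        new_rank = {node: (1 - damping) / n + damping * dangling / n for node in nodes}
        for (src, dst), weight in links.items():
            new_rank[dst] += damping * rank[src] * weight / out_weight[src]
        rank = new_rank
    return rank


# Hypothetical graph: one hub links to two competing sites, which link back.
baseline = {
    ("hub", "site_a"): 1.0,
    ("hub", "site_b"): 1.0,
    ("site_a", "hub"): 1.0,
    ("site_b", "hub"): 1.0,
}
# Same links, but the hub's link to site_a now carries three times the weight.
adjusted = dict(baseline)
adjusted[("hub", "site_a")] = 3.0

before = weighted_pagerank(baseline)
after = weighted_pagerank(adjusted)
for site in ("site_a", "site_b"):
    print(site, round(before[site], 3), "->", round(after[site], 3))
```

In this toy graph the two sites start with identical scores. After the reweighting, `site_a` rises and `site_b` falls by the same amount, even though nothing about either site changed.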

Examples of Surges and Drops

I have a list of 165 domains that were impacted by the March 12 update, including a number of clients that saw movement (either up or down). I wanted to provide some examples of impact (both positive and negative), including findings based on those surges or drops. I can’t cover everything I’ve seen while analyzing sites that were impacted, but I did want to cover some extremely interesting situations.

Disclaimer: Before we begin, I have to provide my typical disclaimer regarding major algorithm updates. I do not work for Google. I don’t have access to its core ranking algorithm. I don’t have a hidden camera in the search engineers’ cafeteria in Mt. View, and I haven’t infiltrated Google headquarters like Tom Cruise in Mission Impossible. I have been to Google Headquarters in both California and New York, and it was tempting to crawl through the vents to find a computer holding all of Google’s algorithms, but I held back. :)

I’m just explaining what I’ve seen after analyzing many sites impacted by algorithm updates over time, and how that compares with what I’m seeing with the latest Google updates. I have access to a lot of data across sites, categories, and countries, while also having a number of sites reach out to me for help after seeing movement from these updates. Again, nobody knows exactly what Google is refining, other than the search engineers themselves.

Sites that surged:

Health/Medical Site – Taking The “Kitchen Sink” Approach To Remediation

The first site I’ll cover is in the health/medical niche that got hit hard during the Medic update in August of 2018. The site lost over 40% of its Google organic traffic overnight.

Once I dug into the situation, I started surfacing a number of problems. I worked with this client for over four months on surfacing all potential problems across the site, including content quality problems, user experience (UX) issues, aggressive monetization, a lack of author expertise for certain types of content, render and performance problems, and more.

The site owners have worked hard to make as many changes as possible during the engagement, and they plan to tackle even more of them over time. Overall, a number of important changes were made to improve the quality of the site, increase E-A-T as much as they could, fix all technical SEO problems they could, decrease aggressive monetization, etc.

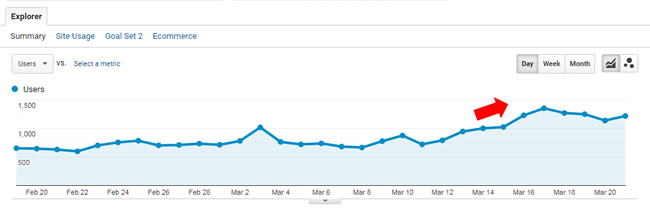

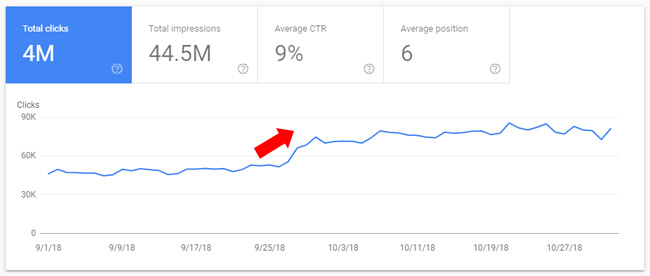

And then March 12 arrived, and the site began to surge. As of today, it’s up 72% since 3/13 (the first full day of the rollout). It’s a great example of taking a “kitchen sink” approach to remediation. Now, as mentioned earlier, it’s hard to say how much of the surge is based on remediation versus a softening of what rolled out in August (or some combination of both), but it’s hard to ignore all of the changes this site made since then.

E-commerce site surging – The Importance of Being Proactive Versus Reactive

Another client that surged is an e-commerce retailer selling a high-end line of products. I helped them a few years ago during medieval Panda days. They improved greatly during that time and their organic search traffic surged during 2015 and 2016. And they contacted me a few months ago after seeing declines during both the August and September updates in 2018. Unfortunately, the site had gone off the tracks slightly and they were experiencing a downturn in traffic.

So I dug into a crawl analysis and audit of the site and began surfacing any problems that could be hurting them. That included digging into their technical SEO setup, reviewing content quality, user experience (UX), performance, and more.

They tend to move fast with changes, so as I was sending findings through, they were addressing those problems very quickly. For example, there was a massive canonicalization issue across many of their category and product pages. In addition, there were some content problems riddling the site.

In particular, all of their category pages had the dreaded fluff descriptions that some e-commerce retailers provide. You know, forced content that doesn’t really help users… And to add insult to injury, most of those descriptions (which were very long), were partially hidden on the page. Users had to click “read more” to reveal the full description. It was pointless and was clearly there just for SEO purposes.

Even though John Mueller has repeatedly explained that e-commerce sites should NOT do this, and that they don’t need to do this, many still employ this tactic. My client took a leap of faith and removed most of that content from every category page on the site (and there are many). They replaced it with just a few helpful lines of copy that were crafted for the user. So about 80% of the text was removed from each description.

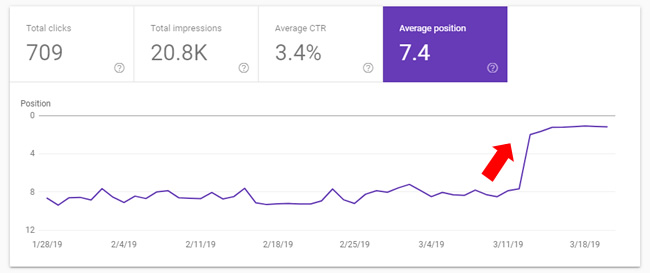

In total, they made a number of important changes on the site (beyond what I just explained). And on March 12, they began to surge. It was fascinating to watch a number of those category pages jump to #1 for their most competitive queries. Not #2 or #3…. But #1. And their average sale is in the thousands of dollars. Needless to say, they were excited to watch this happen. The site is up 57% since 3/13 (the first full day of the rollout).

B2C Content Site (including information and reviews) – A Competitive Battle

The next example is a battle between two competitors. I’ve worked extensively with one of the sites, which has experienced a decline over time. They have worked hard on improving the site overall, including nuking a lot of lower quality and thin content from the site, while also improving the user experience. They have experts producing content and have built a very strong brand over the years. Actually, it’s one of the strongest brands in its niche.

The other site has surged over the past few years and had overtaken my client in terms of search visibility. They are a relatively new brand in the space (think years versus decades for my client). Even though they have surged over the past two years, they have many problems across the site from a quality standpoint, which includes aggressive advertising problems, thin content, over-optimization, and more.

Starting in August, the competitor finally started to drop after years of increasing. And during the March 12 update, they dropped even more. Both sites have insanely powerful link profiles with millions of links each (and many from powerful sites in their niche). But my client has cleaned up many problematic things over the past year, while the competitor has all sorts of problems as mentioned above.

E-commerce retailer in a controversial niche – BBB vs. overall reputation

The next example is an interesting one, considering the recent focus on E-A-T. It’s an e-commerce retailer that’s in a controversial niche. It got smoked during the Medic update, almost getting cut in half visibility-wise. The site has an e-commerce store, but also a lot of educational information, tips, etc. There are thousands of user reviews for the site, with a high average rating. The reviews are handled by a third-party service.

During the latest update on March 12, the site absolutely surged. It has regained a good amount of visibility, although it’s not back to where it was prior to the Medic update.

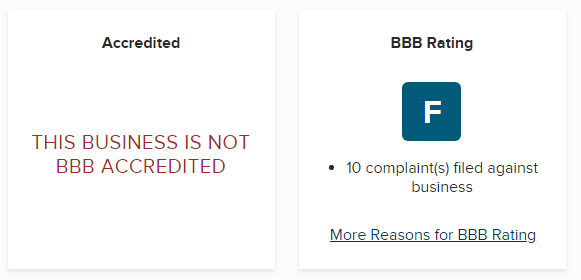

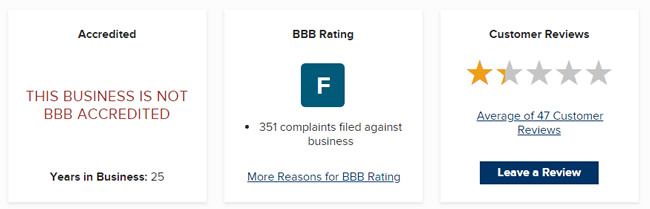

But there’s a reviews dichotomy here, which is interesting. Their user reviews are very strong, but their BBB profile is not. They have an F rating and there are a number of complaints that haven’t been addressed. I’ll touch on BBB ratings later as well, but it’s a great example of Google not using the hard BBB rating, but possibly evaluating reviews from across the web (which makes much more sense).

How-To Content (UGC) – Managing “Quality Indexation”, Improving Technical SEO, and Subdomain Impact

The next site is a large-scale site focused on how-to content that dropped during the Medic update. Since there’s a lot of user-generated content (UGC), there is always the danger of thin or low-quality content getting published at scale. The site has several subdomains targeting different countries.

Once I dug into a crawl analysis and audit of the site, I surfaced many different issues across a number of important categories. For example, although there was a lot of 10X content (super high-quality), there was a lot of thin content and low-quality content mixed in. My client moved quickly to determine which pieces of content should be nuked from the site (by either 404ing or noindexing content). They have removed about 100K URLs as of today.

Next, there were technical SEO issues that could be causing quality problems. For example, canonical issues, performance problems, some render issues, and more. This client moves very quickly, since their dev team is great. It’s not uncommon for me to send findings through and have them implement changes within a few days (or even quicker).

With the March 12 update, we initially thought the site didn’t see much of a gain. But the devil’s in the details. When you look at overall traffic or search visibility, the site didn’t move very much. But, when you check each subdomain, the trending tells a different story. The international subdomains experienced nice gains during the update, while other pieces of the site either remained stable or decreased slightly. It’s a great example of a broad core ranking update impacting sites at the hostname level. More about that next. Here’s how some of the subdomains looked after the March 12 update:

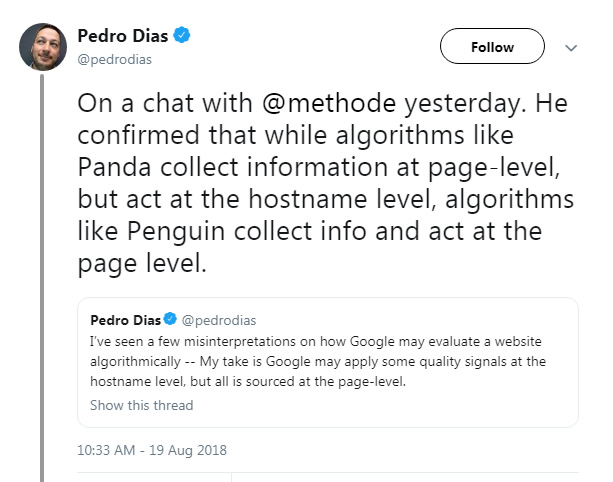
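A hostname-level breakdown like this can be sketched in a few lines of Python. The function name, the `example.com` hostnames, and the click counts below are hypothetical stand-ins for whatever your analytics or Search Console export contains. The sketch simply groups before/after clicks by hostname so that movement on smaller subdomains is not hidden by the site-wide total.

```python
from collections import defaultdict
from urllib.parse import urlsplit


def change_by_hostname(rows):
    """rows: iterable of (url, clicks_before, clicks_after).

    Returns {hostname: fractional change}, skipping hosts with no clicks before.
    """
    before = defaultdict(int)
    after = defaultdict(int)
    for url, clicks_before, clicks_after in rows:
        host = urlsplit(url).hostname
        before[host] += clicks_before
        after[host] += clicks_after
    return {h: (after[h] - before[h]) / before[h] for h in before if before[h] > 0}


# Hypothetical export: the main hostname is flat, two country subdomains gain.
rows = [
    ("https://www.example.com/how-to/a", 1000, 980),
    ("https://www.example.com/how-to/b", 1000, 1000),
    ("https://de.example.com/anleitung/a", 200, 260),
    ("https://fr.example.com/guide/a", 100, 130),
]

per_host = change_by_hostname(rows)
total_before = sum(r[1] for r in rows)
total_after = sum(r[2] for r in rows)
overall = (total_after - total_before) / total_before

print(f"overall: {overall:+.1%}")
for host, change in sorted(per_host.items()):
    print(f"{host}: {change:+.1%}")
```

With these made-up numbers the site as a whole is up only about 3%, while both country subdomains are up 30% and the main hostname is down 1%.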

Side note about hostname-level impact: Reminder, broad core ranking updates can impact sites at the host level (like the subdomain example I just provided). If you’ve followed medieval Panda closely, then that should sound familiar. Here’s a tweet from Pedro Diaz explaining a conversation he had with Google’s Gary Illyes. Gary explained that algorithms like Panda collect data at the page-level, but act at the hostname-level. That’s exactly what I’ve seen across a number of sites that were impacted by these broad core ranking updates over time. It’s just an interesting side-note:

Examples of sites that dropped:

Large-scale YMYL Health/Medical – Being Proactive After Surging With Every Update Until March 12

The first example of a big drop is a YMYL site that had been doing extremely well in a very competitive niche. They reached out to me in the fall since they wanted to have the site audited to make sure they could avoid damage down the line. In other words, although they were surging, they didn’t really know why. And they wanted to make sure they continued doing well. So, they were proactively seeking SEO assistance versus reactively addressing a hit down the line. That’s always a smart approach.

I started analyzing the site in February and it didn’t take long to surface some very big problems. With every twist and turn, I was finding many issues including massive thin content problems, low-quality content, JavaScript render problems, canonical issues, and more. I literally couldn’t figure out why this site had been surging so much.

I brought this to my client’s attention very quickly and have reinforced that point several times. They did begin making some changes, but I mentioned that the next update could bring bad news (since I had a good feeling we were close to a big update… since we hadn’t seen a broad core ranking update since September of 2018).

And then March 12 arrived and the site got hit hard. As of today, the site is down 37% since March 13 (the first full day of the rollout). And when checking queries that dropped and their corresponding landing pages, they line up with the problems I have been surfacing. For example, thin content, empty pages, pages that had render issues, so on and so forth.
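Checking dropped queries against their landing pages can be sketched roughly as follows. The function name, row format, queries, and paths are hypothetical; in practice the rows would come from a before/after comparison of query and page data. The sketch totals the clicks each landing page lost and keeps the queries behind the loss, so the biggest losers can be compared against a list of known problem pages.

```python
from collections import defaultdict


def biggest_page_drops(rows, top_n=3):
    """rows: iterable of (query, landing_page, clicks_before, clicks_after).

    Returns up to top_n (landing_page, clicks_lost, queries) tuples,
    sorted by clicks lost, largest first. Rows that gained are ignored.
    """
    lost = defaultdict(int)
    queries = defaultdict(list)
    for query, page, before, after in rows:
        if after < before:
            lost[page] += before - after
            queries[page].append(query)
    ranked = sorted(lost, key=lambda page: lost[page], reverse=True)
    return [(page, lost[page], queries[page]) for page in ranked[:top_n]]


# Hypothetical data, not from any real site.
rows = [
    ("symptom x", "/conditions/x", 500, 200),
    ("x treatment", "/conditions/x", 300, 150),
    ("drug y dose", "/drugs/y", 400, 390),
    ("z diet", "/diet/z", 100, 140),
]

for page, clicks_lost, page_queries in biggest_page_drops(rows):
    print(page, clicks_lost, page_queries)
# /conditions/x 450 ['symptom x', 'x treatment']
# /drugs/y 10 ['drug y dose']
```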

Large-scale Health Publisher – no E-A-T, republishing content, aggressive ads

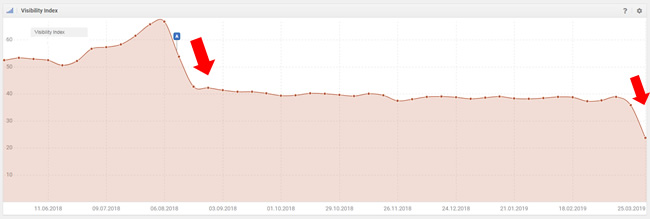

The next example is a YMYL site that focuses heavily on health. It surged during the Medic Update and then even more during the September update.

But it just got crushed during the March 12 update. There are a number of problems across the site that are hard to ignore. First, much of the content has no author listed, so it’s impossible to know if it’s written by a doctor or a kid in high school. Author expertise is extremely important, especially for YMYL content.

Second, there’s an aggressive ad problem. There are low-quality ads all over the site, especially mega-loaded at the bottom of the page.

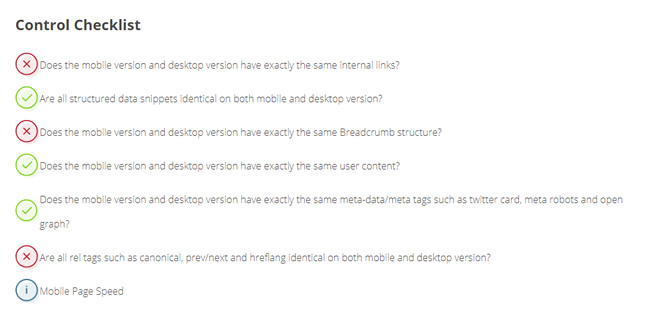

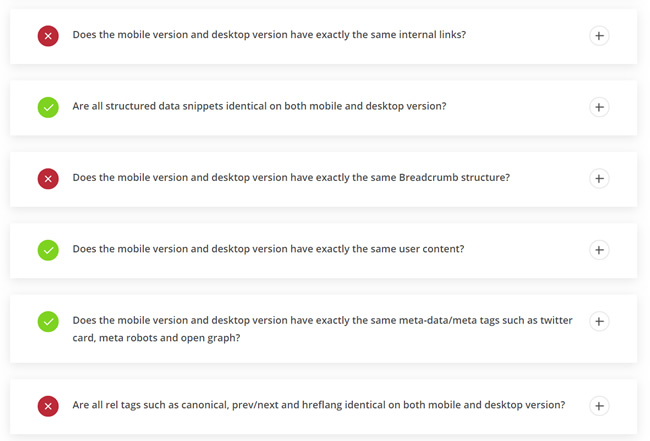

It’s also worth noting that the site uses an m-dot for its mobile content. With mobile-first indexing, it’s extremely important to make sure your m-dot contains the same content, directives, structured data, links, etc. as your desktop version. The mobile version is what Google is using for indexing and ranking purposes. There were definitely gaps between the desktop pages and mobile pages, so that could be contributing to the drop.
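A desktop-versus-mobile parity check can be sketched with nothing but Python's standard library. The class and function names and the sample HTML are hypothetical, and the signals compared here (canonical, meta robots, JSON-LD block count, link count) are just a small subset of what a real audit would cover. In a typical m-dot setup the mobile page's canonical points at the desktop URL, so the canonical value should match on both versions.

```python
from html.parser import HTMLParser


class SeoSignals(HTMLParser):
    """Collects a few indexing-related signals from an HTML document."""

    def __init__(self):
        super().__init__()
        self.signals = {"canonical": None, "robots": None, "json_ld_blocks": 0, "links": 0}

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "link" and (attr.get("rel") or "").lower() == "canonical":
            self.signals["canonical"] = attr.get("href")
        elif tag == "meta" and (attr.get("name") or "").lower() == "robots":
            self.signals["robots"] = attr.get("content")
        elif tag == "script" and (attr.get("type") or "").lower() == "application/ld+json":
            self.signals["json_ld_blocks"] += 1
        elif tag == "a" and attr.get("href"):
            self.signals["links"] += 1


def extract_signals(html):
    parser = SeoSignals()
    parser.feed(html)
    parser.close()
    return parser.signals


def parity_gaps(desktop_html, mobile_html):
    """Returns {signal: (desktop_value, mobile_value)} for signals that differ."""
    desktop, mobile = extract_signals(desktop_html), extract_signals(mobile_html)
    return {key: (desktop[key], mobile[key]) for key in desktop if desktop[key] != mobile[key]}


# Hypothetical pages: the mobile version adds a noindex and drops markup and a link.
desktop_html = (
    '<html><head><link rel="canonical" href="https://www.example.com/a">'
    '<script type="application/ld+json">{}</script></head>'
    '<body><a href="/b">B</a><a href="/c">C</a></body></html>'
)
mobile_html = (
    '<html><head><link rel="canonical" href="https://www.example.com/a">'
    '<meta name="robots" content="noindex"></head>'
    '<body><a href="/b">B</a></body></html>'
)

print(parity_gaps(desktop_html, mobile_html))
# {'robots': (None, 'noindex'), 'json_ld_blocks': (1, 0), 'links': (2, 1)}
```

This only inspects raw HTML. Pages that rely on JavaScript rendering would need to be compared after rendering instead.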

For example, here are some problems I surfaced when comparing mobile pages to desktop pages:

Also, and this is important, the site consumes a lot of syndicated content. I’ve mentioned problems with doing this on a large scale before and it seems this could be hurting the site now. Many articles are not original, yet they are published on this site with self-referencing canonical tags (basically telling Google this is the canonical version). I see close to 2K articles on the site that were republished from other sources.
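One way to surface this pattern at scale is to compare each republished article's canonical tag with its original source. The sketch below assumes you already have, for each article, its URL, its canonical URL, and the URL of the original source if it was republished. The field names and URLs are hypothetical. Pointing the canonical at the original is one commonly recommended way to handle syndicated content, not the only one, so treat the output as a review list rather than a verdict.

```python
def flag_republished_without_source_canonical(articles):
    """articles: iterable of dicts with 'url', 'canonical', and 'source_url'
    (source_url is None for original pieces).

    Returns URLs of republished articles whose canonical does not point
    at the original source (e.g. self-referencing or missing canonicals).
    """
    flagged = []
    for article in articles:
        source = article["source_url"]
        republished = source is not None and source != article["url"]
        if republished and article["canonical"] != source:
            flagged.append(article["url"])
    return flagged


# Hypothetical inventory.
articles = [
    {"url": "https://example.com/original-piece",
     "canonical": "https://example.com/original-piece",
     "source_url": None},
    {"url": "https://example.com/republished-1",
     "canonical": "https://example.com/republished-1",  # self-referencing
     "source_url": "https://publisher.example.org/story-1"},
    {"url": "https://example.com/republished-2",
     "canonical": "https://publisher.example.org/story-2",  # points at the source
     "source_url": "https://publisher.example.org/story-2"},
]

print(flag_republished_without_source_canonical(articles))
# ['https://example.com/republished-1']
```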

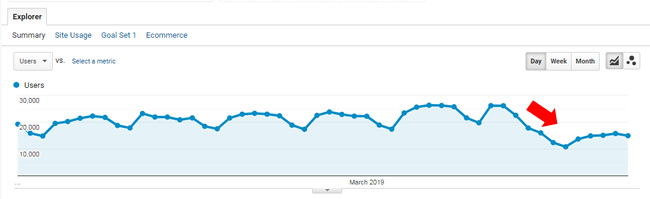

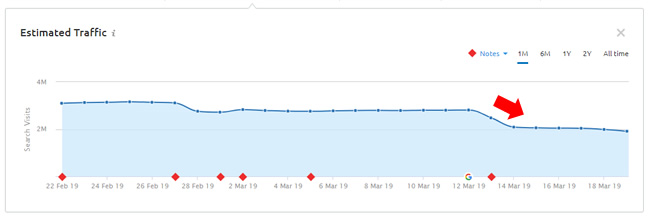

Here is what happened during the March 12 update. The site lost a significant amount of search visibility:

Niche Publisher (International site) – Aggressive and Deceptive Ads, Not Secure, Lacking Author Expertise

The next site I’ll cover is an international publisher focused on a very specific niche. The site was not affected by the Medic update in August, but surged like mad during the September update.

They increased even more after September and had surpassed 80K users per day. But March 12 ended up being a very bad day for them. The site dropped heavily, losing 46% of its Google organic traffic since the update rolled out.

When reviewing the site, there were a few things that stood out immediately. First, there was a big aggressive advertising problem (with certain elements that were deceptive too). For example, the hero image for each article was a large ad right in a core area of the content. I’ll guarantee some users were clicking that image and being whisked off the site to the advertiser site. I’ve mentioned ads like this before many times in my articles about major algorithm updates. Hell hath no fury like a user scorned.

Next, there were aggressive ads woven into the content. For example, accordion ads that were injected in between paragraphs. They were large and intrusive.

Beyond that, there is no author information at all. For each article published, users (and Google) have no idea who wrote the content. With Google always wanting to make sure they are sending users to the most authoritative posts written by people with expertise in a niche, having no author information is not a good idea.

And last, but not least, the site still hadn’t moved to https. Now, https is a lightweight ranking factor, but it can be the tiebreaker when two pages are competing for a spot in the SERPs. Also, http sites can turn off users, especially with the way Chrome (and other browsers) are flagging them. For example, there’s a “not secure” label in the browser. And Google can pick up on user happiness over time in a number of ways (which can indirectly impact a site rankings-wise). Maybe users leave quickly, maybe they aren’t as apt to link to the site, share it on social media, etc. So not moving to https can be hurting the site on multiple levels (directly and indirectly).

Large-scale Lyrics Website – UX Barriers, Aggressive, Disruptive, and Deceptive Ads + The Same Content As Many Other Sites

The next site I’ll cover is a large-scale lyrics site that has gotten hit by multiple algorithm updates over the years (including when medieval Panda roamed the web). And it had experienced significant volatility in 2018, where it dropped during the March 7, 2018 update, and then surged with the September 2018 update. It was clearly in the gray area of Google’s algorithms. Unfortunately, the site just got hit hard by the March 12 update, cutting some of the gains it made in September of 2018.

When reviewing the site, it was clear there were some problems. First, any site that provides the same exact content as other sites must provide some type of value-add. If not, you are leaving Google with a very hard decision when it’s presented with many options for users searching for the same content available on many sites across the web.

Actually, Google’s John Mueller just covered this again during a recent webmaster hangout video. He explained that if a site contains the same exact content as many others, then they should try to provide as much unique value as possible.

Here is the video (at 45:10 in the video):

This site does not provide a value-add. It’s literally the same lyrics content that’s available on many other sites. As an alternative, some sites provide song meanings, information about the artists, concert information, and more.

Second, there is ultra-aggressive advertising on the site. I’ve mentioned many times in the past the problems with aggressive and disruptive advertising and how sites that employ them often fare during major Google algorithm updates (especially when combined with other problems). This site contained deceptive ads in prominent areas of the content, which I’m sure is infuriating some users.

So, we have a site with aggressive, disruptive, and deceptive advertising, and the same exact content as many other sites on the web (without providing any value-add). The combination is clearly not working for them (as they have ridden the Google roller coaster for years – surging and dropping with many Google algorithm updates).

A Note About Reputation – Focus on overall reputation, not a single score.

I don’t want to spend too much time on this, since this post is already long. We know that Google doesn’t use hard BBB ratings when evaluating sites. I asked John Mueller about that a few months ago. But, that doesn’t mean Google is ignoring overall reputation across the web. There’s a big difference between the two.

After the March 12 update, I checked the ratings and reviews for a number of sites that surged or dropped and found that BBB ratings/reviews often did not correlate with surges or drops (that is, a strong BBB rating did not reliably line up with a surge, and a weak BBB rating did not reliably line up with a decline). That said, overall reputation could be impacting those sites.

For example, I noticed that a large gaming company has a BBB rating of F with many complaints. They didn’t drop at all during this update. Overall, their games have been a massive hit with millions of users. And many of those users love the game (and have reviewed it, blogged about it, shared that across social media, and more). So, it’s a good example of a site with an F rating from the BBB, but a different reputation across the entire web.

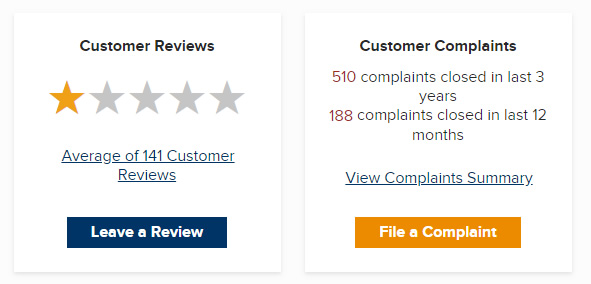

I also checked a huge e-commerce retailer, which surged during the March 12 update, even though it has 510 complaints via the BBB. But they have a huge following and a much different reputation overall across the web. This also leads me to believe that if Google is using reputation, they are doing so in aggregate and not using third-party scores or ratings.

This topic could yield an entire post… but I just wanted to mention reputation as it relates to these broad core ranking updates. In my opinion, I would focus on overall reputation (which is what you should be doing anyway). And even if you have specific third-party ratings that are low, that doesn’t mean those specific ratings will drag your site down if you have other positive signals across the web.

What Site Owners Can Do – The “Kitchen Sink” Approach To Remediation

My recommendations aren’t new. I’ve been saying this for a very long time. Don’t try to isolate one or two problems… Google is evaluating many factors when it comes to these broad core ranking updates. My advice is to surface all potential problems with your site and address them all. Don’t tackle just 20% of your problems. Tackle close to 100% of your problems. Google is on record explaining they want to see significant improvement in quality over the long-term in order for sites to see improvement. See my examples above for how that can work.

Here are some things you can do now (for improvement down the line). Again, this list should be familiar…

- Review and address content quality. Surface all thin or low-quality content and handle appropriately. That could mean boosting content that’s low-quality, 404ing that content, or noindexing it.

- Analyze your technical SEO setup and fix any problems you surface as quickly as you can. I’ve always said that what lies below the surface could be scary and dangerous SEO-wise. For example, canonical problems, render problems, crawling problems, and more.

- Objectively analyze your advertising situation. Do you have any aggressive, disruptive, or deceptive ads? Nuke those problems.

- Are there user experience (UX) barriers on your site? Are you frustrating users? If so, remove all of the barriers and make your site easy to use. Don’t frustrate users. Remember, hell hath no fury like a user scorned.

- On that note, are you meeting and/or exceeding user expectations? Review the queries leading to your site and then the landing pages receiving that traffic. Identify gaps and fill them.

- Make sure experts are writing and reviewing your content (especially if you focus on YMYL topics). Google is clearly looking for expertise when it matches users with content.

- Build the right links, and not just many links. It’s not about the quantity, it’s about quality. We learned that Google (partly) evaluates E-A-T via PageRank, which is from links across the web. Use a strong content strategy along with a strong social strategy to build links naturally. When you do, amazing things can happen.

Summary – The March 12 Update Was Huge. The Next Is Probably A Few Months Away

Google only rolled out three broad core ranking updates in 2018. Now we have our first of 2019 and it impacted many sites across the web. If you’ve been impacted by the March update, it’s important to go through the steps I listed and look to significantly improve your site over the long-term. That’s what Google wants to see (they are on record explaining this).

Don’t just cherry pick changes to implement. Instead, surface all potential problems across content, UX, advertising, technical SEO, reputation, and more, and address them as thoroughly as you can. That’s how you can see ranking changes down the line. Good luck.

GG